Deepfake Statistics [2026]: Growth, Fraud & Detection Data

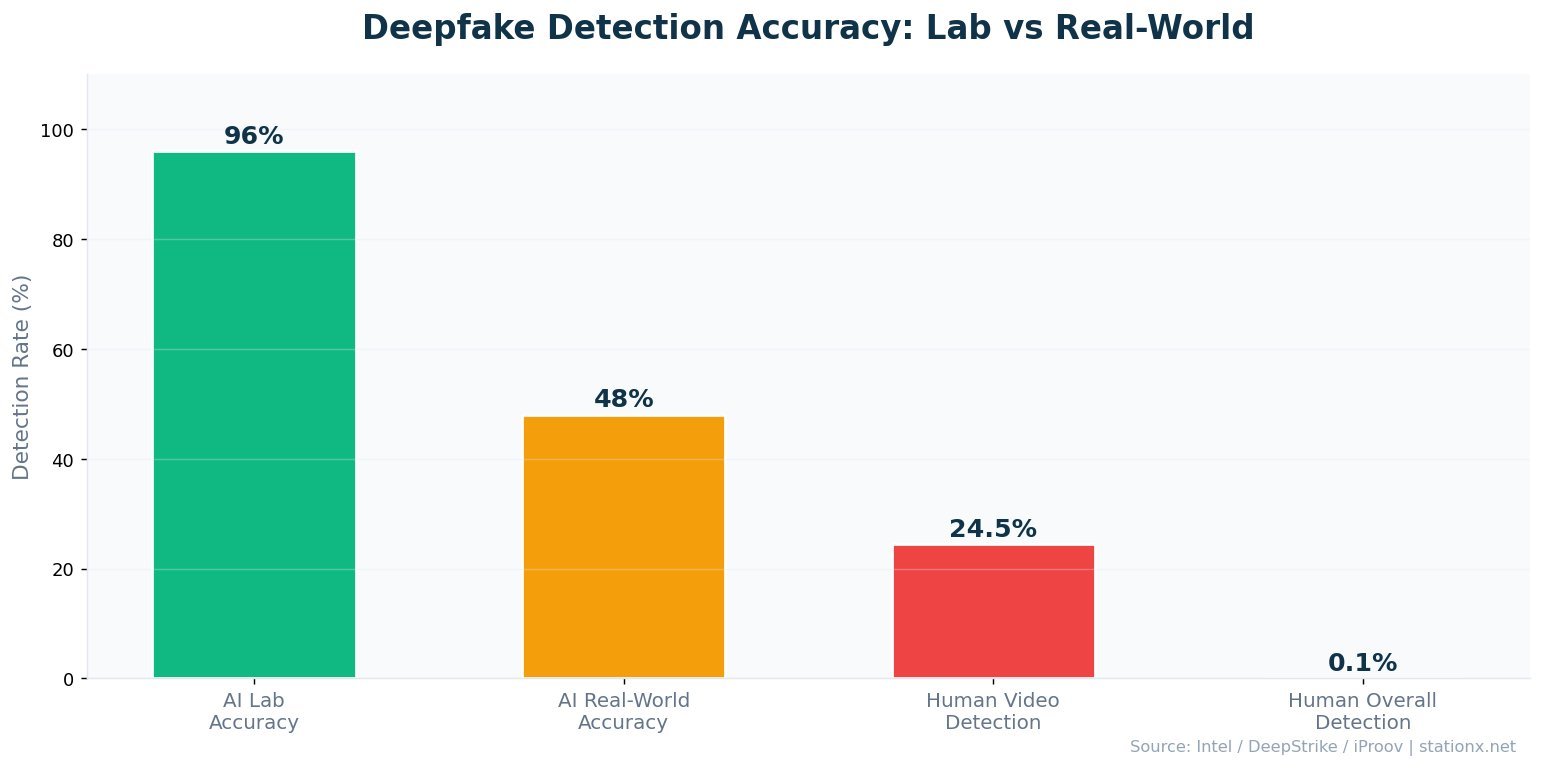

8 million deepfakes now circulate online, up from 500,000 just two years ago (DeepStrike). Deepfake-enabled fraud surged 3,000% in North America in a single year (Onfido), and only 0.1% of people can reliably spot an AI-generated fake (iProov). A single deepfake video call produced the largest known deepfake scam to date when attackers impersonated multiple colleagues simultaneously on a live call (CrowdStrike).

You will find 100+ deepfake statistics across 13 categories below -- from voice cloning and deepfake video detection to financial fraud, corporate impersonation, and legislation -- sourced from iProov, CrowdStrike, McAfee, Deloitte, WEF, and 30+ authoritative reports. Each section includes original analysis cross-referencing multiple sources to surface insights you will not find in any single report.

Key Takeaways

- 8 million deepfakes circulate online -- a 16x increase in two years

- Deepfake fraud grew 3,000% in North America, and deepfakes now account for 6.5% of all fraud attempts globally

- A single deepfake video call cost Arup $25.6 million (CrowdStrike)

- Voice clones need just 3 seconds of audio for an 85% match (McAfee)

- Only 0.1% of people can reliably identify a deepfake (iProov)

- AI detection tools achieve up to 96% accuracy in the lab but drop 45-50% in real-world conditions

- 80% of companies have no deepfake response plan (Keepnet)

- 47 US states have enacted deepfake legislation since 2022

- The deepfake detection market is projected to reach $15.7 billion by 2026 (Deloitte)

Last updated: May 2026

📊 Headline Deepfake Numbers

The scale of deepfake content has exploded. An estimated 8 million deepfakes were circulating online by 2025, up from 500,000 in 2023 (DeepStrike). That is a 16x increase in two years. DeepStrike estimates that deepfake content is growing at approximately 900% annually, driven by accessible AI tools that require minimal technical skill.

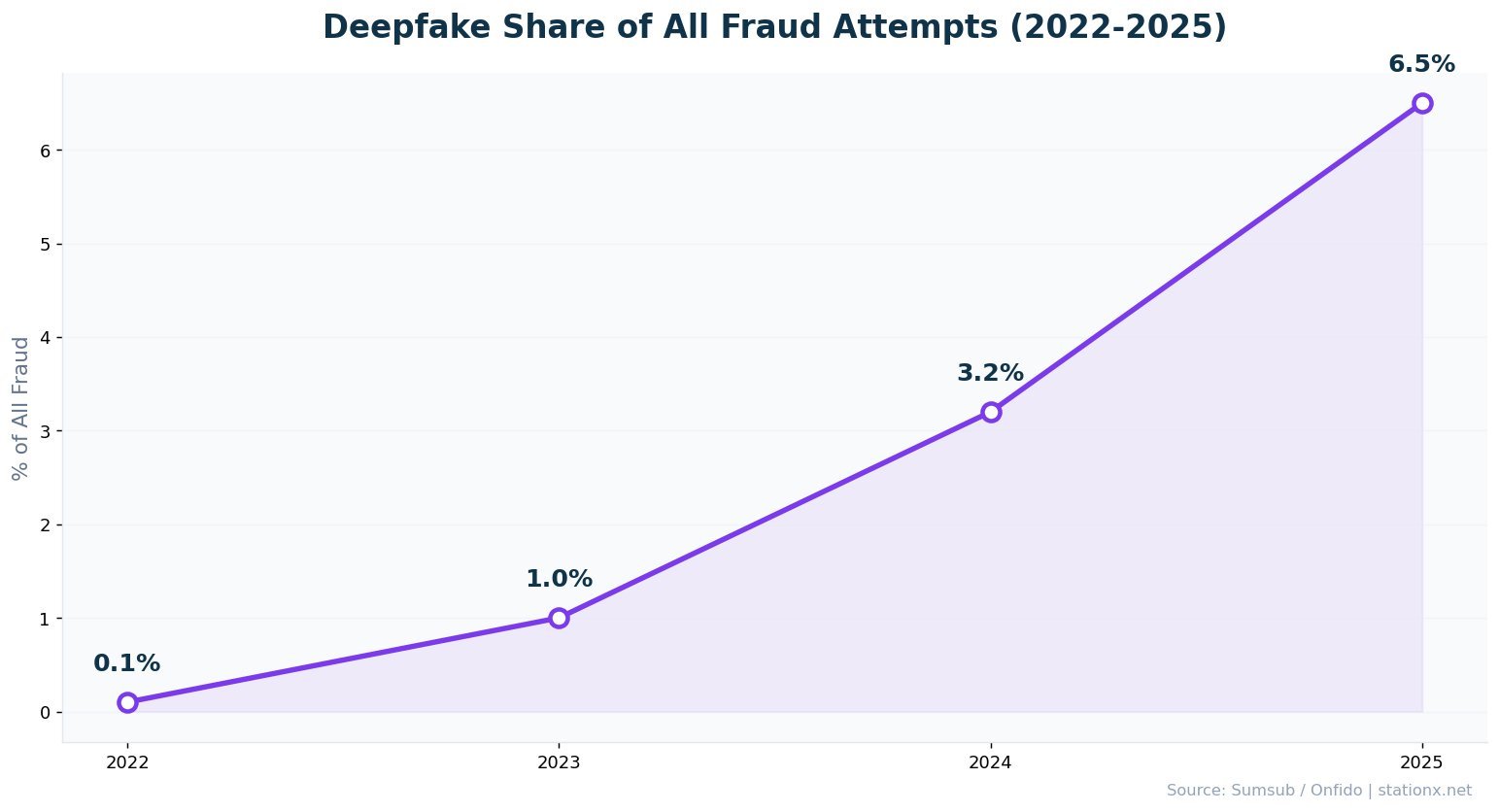

Deepfake fraud now accounts for 6.5% of all fraud attempts globally, up from 0.1% in 2022, while the number of deepfake fraud attempts rose 2,137% over the same period (Sumsub). Global financial losses from deepfake scams exceeded $200 million in Q1 2025 alone (DeepStrike). In the US, deepfake fraud losses reached $1.1 billion for the full year 2025 (Keepnet).

The largest single deepfake scam used real-time video call impersonation of multiple colleagues simultaneously, a technique detailed in the Deepfake Attacks on Business section below (CrowdStrike). CEO deepfake fraud now targets approximately 400 companies per day (BrightSide AI).

| Finding | Value | Source |
|---|---|---|
| Deepfakes circulating online | 8 million | DeepStrike |
| Deepfake fraud increase (North America) | 3,000% | Onfido 2024 Identity Fraud Report |
| People who can reliably detect deepfakes | 0.1% | iProov Deepfake Blindspot Study 2025 |
| Largest single deepfake scam | $25.6M | CrowdStrike 2025 Global Threat Report |
| Projected US GenAI fraud losses by 2027 | $40B | Deloitte Center for Financial Services |
| Audio needed to clone a voice | 3 seconds | McAfee |
| Deepfakes as share of all fraud attempts | 6.5% | Sumsub |
| Deepfake fraud surge (2024) | 1,300% | Pindrop 2025 Voice Intelligence & Security Report |
| US deepfake fraud losses (2025) | $1.1B | Keepnet Labs |
| C-suite leaders least prepared for deepfakes | 28% | WEF Global Cybersecurity Outlook 2025 |

The threat landscape around AI deepfake technology has shifted from theoretical to operational. Two years ago, deepfake statistics showed a niche problem affecting a handful of public figures. Today, the data shows an industrialised attack vector that targets individuals and corporations alike. Deepfake cybersecurity is no longer optional -- it is a boardroom priority.

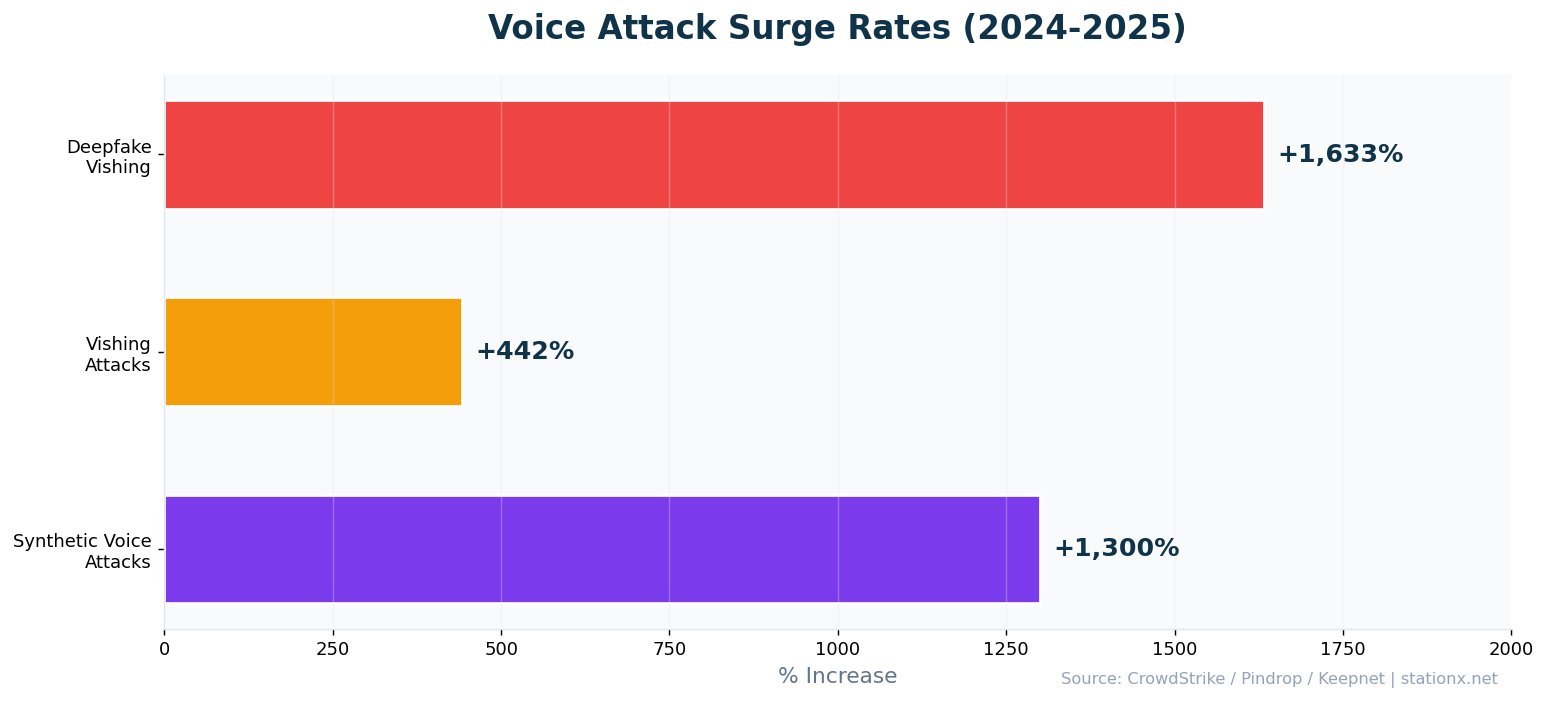

The numbers paint a clear trajectory. Per-incident losses from deepfake attacks exceed those of almost every other social engineering vector. Cloned-voice vishing surged 1,633% in a single quarter. Humans detect fake videos less than 25% of the time. And the best AI detection tools, while reaching 96% accuracy in the lab, drop 45-50% when deployed in real-world conditions.

Nathan House's Analysis: The 3-Second Voice Clone Changes Everything

Cross-referencing McAfee's voice cloning data with CrowdStrike's vishing surge numbers reveals a step-change in social engineering. It takes 3 seconds of audio to clone a voice with an 85% match. Vishing attacks surged 442% between the first and second halves of 2024, and deepfake-enabled vishing surged another 1,633% in Q1 2025. This is not gradual growth -- it is an inflection point. The Arup incident (detailed in the Deepfake Attacks on Business section) used real-time video deepfakes of multiple colleagues to extract $25.6 million. Compare that to the average BEC loss of $137,000 (FBI IC3): deepfakes have raised the potential single-incident loss by roughly 187x.

📈 Deepfake Growth & Volume

500,000 deepfakes existed online in 2023. By 2025, that number reached 8 million (DeepStrike). The annual growth rate sits at approximately 900%, fuelled by open-source AI models and commercial deepfake-as-a-service platforms. First-quarter 2025 alone produced 19% more deepfake incidents than the entire year of 2024 (Keepnet).

The share of deepfakes in global fraud attempts grew from 0.1% in 2022 to 6.5% in 2025, and the number of deepfake fraud attempts rose 2,137% over the same period (Sumsub). Sumsub's detection systems also identified a 4x increase in deepfake attempts between 2023 and 2024, putting deepfakes at 7% of all fraud attempts in that dataset. North America saw a 3,000% increase in deepfake fraud in a single year (Onfido).
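The share figures and the 2,137% figure cannot describe the same quantity. A short percentage-change calculation makes the difference explicit; it assumes the 2,137% refers to growth in the number of deepfake fraud attempts rather than in their share of all fraud:

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Deepfakes as a share of all fraud attempts, in percent (figures quoted above).
share_2022 = 0.1
share_2025 = 6.5

share_growth = percent_change(share_2022, share_2025)
print(f"Growth in share: {share_growth:,.0f}%")  # → Growth in share: 6,400%

# A 2,137% increase means the count ends at 1 + 2137/100 times its starting value.
count_multiplier = 1 + 2137 / 100
print(f"2,137% increase = {count_multiplier:.2f}x the 2022 level")  # → 22.37x the 2022 level
```

Moving from 0.1% to 6.5% is a 65-fold rise in share, while a 2,137% rise is roughly 22-fold, so the two numbers describe different measures and should not be presented as one.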

| Finding | Value | Source |
|---|---|---|
| Estimated deepfakes online (up from 500K in 2023) | 8 million | DeepStrike |
| Annual growth rate in deepfake content | 900% | DeepStrike |
| Increase in deepfake fraud attempts since 2022 | 2,137% | Sumsub |
| Deepfakes detected increase 2023-2024 | 4x | Sumsub |
| Deepfakes as share of all fraud attempts | 6.5% | Sumsub |
| Q1 2025 incidents compared with all of 2024 | 19% more | Keepnet Labs |
| Deepfake fraud increase in North America (2023) | 3,000% | Onfido 2024 Identity Fraud Report |
| Online deepfakes that are non-consensual porn | 98% | Sensity AI / DeepStrike |

From Novelty to Industrialised Threat

The growth curve matters more than the absolute number. If the 900% annual rate held, the 8 million deepfakes online today would become 80 million within 12 months. Cross-referencing Sumsub's fraud data (deepfakes now 6.5% of all fraud) with Onfido's North America numbers (3,000% increase), deepfakes are transitioning from a rare edge case to a mainstream attack vector. The tools are free, the barrier to entry is close to zero, and the financial returns are proven.
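The 80 million projection is straightforward compounding: 900% growth is a 10x multiplier per year. Running the same arithmetic backwards on the 2023 and 2025 endpoints gives a different answer, which is worth knowing before quoting both numbers together. A minimal sketch, assuming constant annual growth:

```python
def project(count: float, annual_growth_pct: float, years: float) -> float:
    """Project a count forward at a constant annual growth rate (in percent)."""
    return count * (1 + annual_growth_pct / 100) ** years


def implied_annual_growth_pct(start: float, end: float, years: float) -> float:
    """Constant annual growth rate (in percent) that turns start into end."""
    return ((end / start) ** (1 / years) - 1) * 100


# 900% annual growth: each year's count is 10x the previous year's.
next_year = project(8_000_000, 900, 1)
print(f"{next_year:,.0f}")  # → 80,000,000

# The two DeepStrike endpoints: 500,000 (2023) -> 8,000,000 (2025).
rate = implied_annual_growth_pct(500_000, 8_000_000, 2)
print(f"{rate:.0f}% per year")  # → 300% per year
```

The endpoints imply roughly 300% a year (4x), so the 900% rate is DeepStrike's separate estimate rather than something that follows from the 500,000 and 8 million figures alone.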

98% of all deepfake videos are non-consensual pornography (Sensity AI). This remains the dominant category by volume, though financial fraud deepfakes are growing fastest. The distinction matters for regulation: pornographic deepfakes target individuals, while fraud deepfakes target organisations. The two require different defensive approaches.

The deepfake statistics for 2026 show that accessibility is the primary growth driver. Open-source AI models like Stable Diffusion, combined with commercial deepfake-as-a-service platforms, have eliminated the technical barrier. Creating a convincing AI deepfake no longer requires machine learning expertise. A consumer-grade laptop and a few minutes of tutorial videos are sufficient. This democratisation of deepfake creation is why volume is growing at 900% annually.

Deepfake Growth Timeline

- 2017: First widely-known deepfakes appear on Reddit. The term "deepfake" is coined. Content is crude and easily detected.

- 2019: 14,678 deepfake videos detected online. First deepfake voice scam steals $243,000 from a UK energy firm. Non-consensual pornography dominates.

- 2022: Deepfakes account for 0.1% of all fraud attempts (Sumsub). Open-source generation tools proliferate. Quality improves dramatically with diffusion models.

- 2023: 500,000 deepfakes online. 3,000% fraud increase in North America (Onfido). Real-time deepfake video generation becomes commercially available.

- 2024: Largest single deepfake scam hits Arup via multi-participant video call. 1,300% synthetic voice attack surge (Pindrop). 38 countries face election deepfakes. 4x detection increase (Sumsub).

- 2025: 8 million deepfakes online. 6.5% of all fraud. $1.1B US losses. 1,633% vishing surge. 47 US states have legislated. Detection market projected to reach $15.7B by 2026.

How common are deepfakes in everyday digital interactions? The data suggests they are far more prevalent than most people realise. With 8 million deepfakes online and only 0.1% of humans able to detect them, the vast majority of encounters go unnoticed. Social media platforms report struggling to identify and remove synthetic content at scale. The gap between creation speed and detection speed continues to widen.

💰 Deepfake Fraud & Financial Impact

The Arup Hong Kong incident in 2024 set the benchmark at $25.6 million lost from a single deepfake video call (CrowdStrike). The full anatomy of the attack -- how multiple AI-generated participants deceived a finance team member across 15 transactions -- is detailed in the Deepfake Attacks on Business section. That single incident exceeds what most ransomware gangs extract in a year.

Deepfake fraud losses in the US alone reached $1.1 billion in 2025, tripling from $360 million the year before (Keepnet). Globally, Q1 2025 losses exceeded $200 million (DeepStrike). Deloitte projects GenAI-enabled fraud losses will reach $40 billion in the US by 2027, growing at a 32% compound annual growth rate from $12.3 billion in 2023.
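Both growth claims in the paragraph above can be checked directly. This sketch takes Deloitte's and Keepnet's figures as given and assumes four compounding years between the 2023 baseline and 2027:

```python
def cagr_pct(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, in percent, from start to end."""
    return ((end / start) ** (1 / years) - 1) * 100


# Deloitte: $12.3B (2023) -> $40B (2027), in billions of USD.
implied = cagr_pct(12.3, 40.0, 4)
print(f"Implied CAGR: {implied:.1f}%")  # → Implied CAGR: 34.3%

# The other direction: $12.3B compounded at exactly 32% for four years.
at_32 = 12.3 * 1.32 ** 4
print(f"$12.3B at 32% for 4 years: ${at_32:.1f}B")  # → $37.3B

# Keepnet: US losses of $360M (2024) -> $1,100M (2025).
us_multiple = 1100 / 360
print(f"US year-over-year multiple: {us_multiple:.2f}x")  # → 3.06x
```

The endpoints imply about 34% a year, and compounding at exactly 32% lands near $37 billion, so the $40 billion headline and the 32% rate are approximately rather than exactly consistent. The Keepnet figures support the "tripling" description.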

| Finding | Value | Source |
|---|---|---|
| Deepfake fraud losses Q1 2025 | $200M+ | DeepStrike |
| US deepfake fraud losses (2025) | $1.1B | Keepnet Labs |
| Projected US GenAI fraud losses by 2027 | $40B | Deloitte Center for Financial Services |
| GenAI fraud CAGR (2023-2027) | 32% | Deloitte |
| Deepfake fraud surge in 2024 | 1,300% | Pindrop 2025 Voice Intelligence & Security Report |
| Voice clone targets who lost money | 77% | ScamWatch HQ |
| Companies targeted by CEO deepfake fraud daily | 400 | BrightSide AI |

Deepfake Fraud Scale

- Avg enterprise loss per attack: $680K

- US annual losses: $1.1B (2025)

- Q1 2025 global losses: $200M+

- Projected 2027 GenAI fraud losses: $40B (US only)

- CEO deepfake fraud targets: 400 companies/day

- Voice clone targets who lose money: 77%

Traditional BEC Comparison

- Average BEC loss: $137K (FBI IC3)

- FBI total BEC losses: $2.77B (2024)

Nathan House's Analysis: Deepfakes Are 187x More Costly Than Traditional BEC

The largest single deepfake scam, the $25.6 million Arup incident, dwarfs the FBI IC3 average BEC loss of approximately $137,000 per incident -- a 187x difference. That compares an outlier with an average, but the point stands: deepfakes have shifted the ceiling on what a single social engineering attack can extract. The 32% CAGR from $12.3 billion (2023) to $40 billion (2027) in GenAI-enabled fraud losses suggests we are still in the early innings of deepfake-enabled financial fraud.
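The 187x figure compares a single outlier with an average. Dividing the average large-enterprise deepfake loss quoted in this section by the same BEC average gives a more like-for-like comparison, bearing in mind that the figures come from different sources:

```python
# Figures quoted in this section, in USD.
largest_deepfake_loss = 25_600_000      # Arup, single incident (CrowdStrike)
avg_deepfake_enterprise_loss = 680_000  # average large-enterprise loss per deepfake attack
avg_bec_loss = 137_000                  # FBI IC3 average BEC loss per incident

outlier_ratio = largest_deepfake_loss / avg_bec_loss
typical_ratio = avg_deepfake_enterprise_loss / avg_bec_loss

print(f"Largest deepfake vs average BEC: {outlier_ratio:.0f}x")  # → 187x
print(f"Average deepfake vs average BEC: {typical_ratio:.1f}x")  # → 5.0x
```

Even average to average, deepfake attacks cost roughly five times as much per incident as traditional BEC on these figures.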

The Trajectory of Deepfake Fraud Losses

The financial trajectory of deepfake fraud follows a clear pattern. In 2022, deepfakes accounted for 0.1% of all fraud attempts (Sumsub). By 2025, that share reached 6.5%, while the number of deepfake fraud attempts rose 2,137%. US-specific losses tripled from $360 million in 2024 to $1.1 billion in 2025 (Keepnet). Deloitte's projection of $40 billion in GenAI-enabled fraud losses by 2027 represents a 32% CAGR from the $12.3 billion baseline in 2023. The 2027 figure is a projection, but it extrapolates from measured year-over-year data rather than speculation.

The deepfake fraud statistics paint a picture of a threat vector that is maturing rapidly. Early deepfake attacks were crude and targeted unsophisticated victims. Current attacks target corporate finance teams with real-time video conferencing deepfakes. The next evolution -- AI agents conducting multi-step fraud operations autonomously -- is already being discussed in threat intelligence circles. The Arup incident (detailed in the Deepfake Attacks on Business section) may be the opening chapter, not the climax.

CEO deepfake fraud now targets 400 companies daily (BrightSide AI). 77% of voice clone targets who were reached lost money (ScamWatch). The average deepfake fraud incident exceeds $500,000 in losses, with large enterprises losing an average of $680,000 per attack. These are the deepfake fraud statistics that CFOs need to see when evaluating cybersecurity investment.

🎙️ Voice Cloning & Vishing Statistics

Three seconds. That is all the audio needed to create a voice clone with an 85% match (McAfee). With just a short voicemail, social media clip, or conference call recording, attackers can generate a convincing replica of any voice. 25% of adults have already encountered an AI voice scam (McAfee), and that number is climbing fast.

Vishing attacks surged 442% between the first and second halves of 2024 (CrowdStrike/Hoxhunt). Deepfake-enabled vishing then surged another 1,633% in Q1 2025 compared to Q4 2024 (CrowdStrike/Keepnet). Pindrop recorded a 1,300% jump in synthetic voice attacks during 2024. 70% of organisations report being targeted by vishing attacks (Keepnet).

The financial conversion rate is alarming. 77% of voice clone fraud targets who were reached actually lost money (ScamWatch). Voice cloning technology has crossed what Fortune magazine calls the "indistinguishable threshold" -- the average listener cannot reliably distinguish a cloned voice from a real one.

Where Attackers Get Voice Samples

The 3-second voice clone threshold means any recorded speech is a potential attack asset. Common sources include: corporate earnings calls (publicly streamed and archived), conference presentations (recorded and posted on YouTube), podcast appearances, LinkedIn video posts, voicemail greetings, and social media content. Executives and public-facing employees generate the most accessible voice data, but internal meeting recordings and training videos also provide material if an attacker gains partial network access.

Voice Cloning Statistics: Attack Patterns

Voice cloning attacks follow a consistent pattern. The attacker identifies a target organisation and its key personnel. They gather voice samples from publicly available sources. Using commercial or open-source AI tools, they generate a voice clone in minutes. The cloned voice is then used in a vishing call -- typically impersonating a C-level executive requesting an urgent financial transfer. The 442% vishing surge (CrowdStrike/Hoxhunt) and 1,300% synthetic voice attack increase (Pindrop) confirm this pattern is scaling rapidly.

70% of organisations report being targeted by vishing attacks (Keepnet). 2.56 billion scam phone calls were reported in 2025 (Truecaller). Not all of these use voice cloning, but the AI-enabled subset is the fastest-growing category. The convergence of voice cloning statistics and vishing attack data points to a threat that will define deepfake cybersecurity strategy for the next several years.

| Finding | Value | Source |
|---|---|---|
| Audio needed for 85% voice match clone | 3 seconds | McAfee |
| Adults who have experienced an AI voice scam | 25% | McAfee |
| Synthetic voice attack surge (2024) | 1,300% | Pindrop 2025 Voice Intelligence & Security Report |
| Vishing attack surge, H1 to H2 2024 | 442% | CrowdStrike 2025 Global Threat Report / Hoxhunt |
| Deepfake vishing surge Q1 2025 vs Q4 2024 | 1,633% | CrowdStrike / Keepnet |
| Voice clone targets who confirmed financial loss | 77% | ScamWatch HQ |
| Users fooled by deepfake voices | 25% | Keepnet Labs |
| Organizations targeted by vishing attacks | 70% | Keepnet Labs |
| Scam phone calls reported (2025) | 2.56 billion | Truecaller / Programs.com |

Nathan House's Analysis: Voice Cloning Makes Every Executive a Target

Cross-referencing McAfee's 3-second clone threshold with CrowdStrike's 442% vishing surge and the 77% victim conversion rate produces a concerning equation. Any executive who has ever spoken on a recorded call, podcast, webinar, or YouTube video has provided enough audio for a convincing clone. The old social engineering playbook required research and preparation. Voice cloning reduces attack preparation time from hours to seconds. The 1,633% Q1 2025 surge is the market signal that attackers have figured this out at scale.

🎬 Deepfake Video Statistics

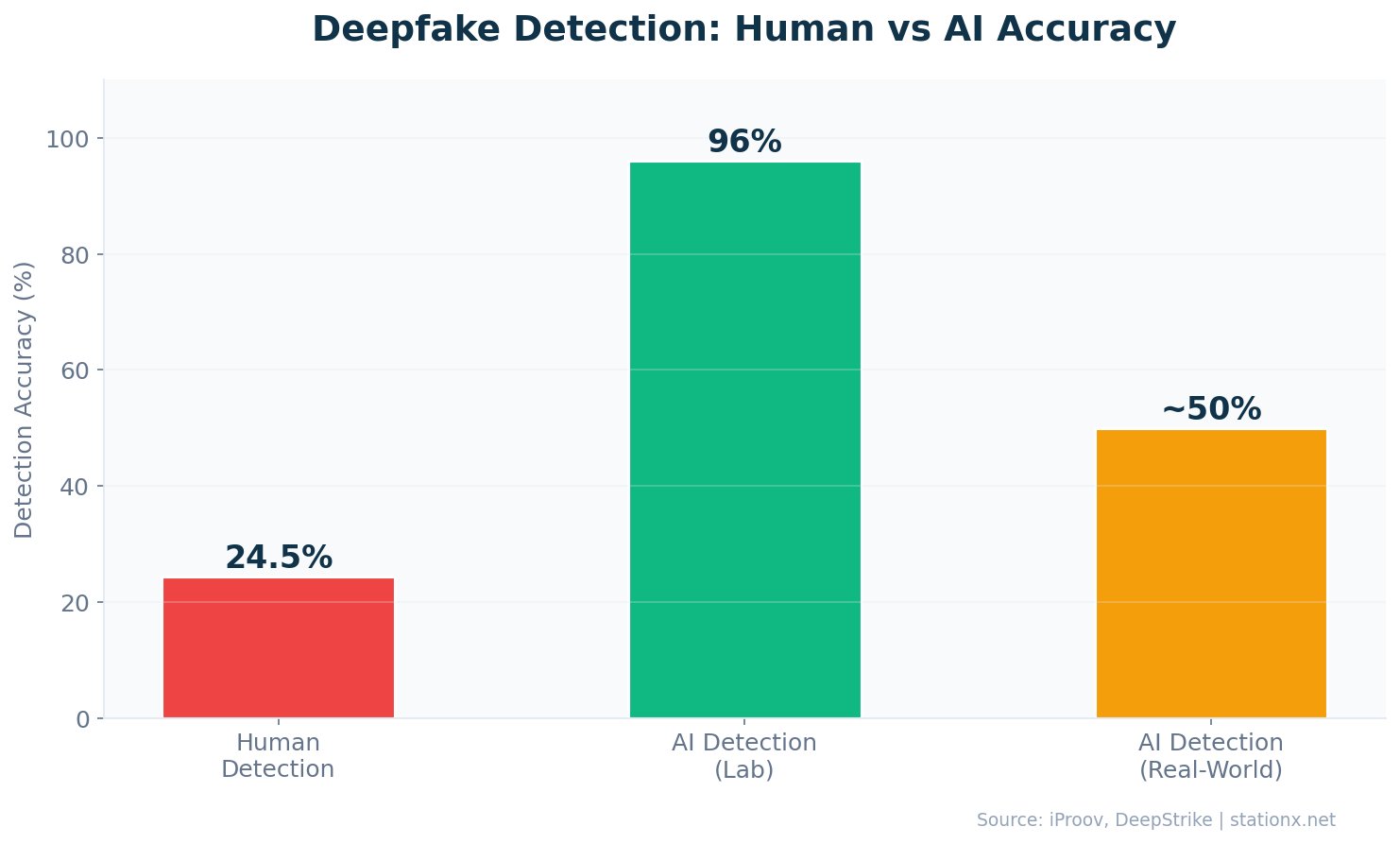

24.5% -- that is the rate at which humans correctly identify high-quality deepfake videos (DeepStrike/iProov). Three out of four deepfake videos pass undetected by the human eye. The broader iProov study found that only 0.1% of people can reliably identify AI-generated deepfakes across multiple tests (iProov 2025). The gap between what AI can generate and what humans can detect has never been wider.

98% of all deepfake videos are non-consensual pornography, and 99-100% of victims are women (Sensity AI). This makes deepfake pornography by far the largest category of deepfake content. Major deepfake pornography sites have catalogued nearly 4,000 female celebrities, alongside countless private individuals. The volume of deepfake porn produced in 2023 was 464% higher than in 2022.

Deepfake Video Quality & Generation Speed

Deepfake video quality has improved dramatically. Early deepfakes exhibited obvious artifacts -- blurring around the hairline, mismatched lighting, unnatural eye movements. Current generation models produce output that passes human inspection 75.5% of the time (DeepStrike). Real-time deepfake video generation -- the technology used in the Arup scam -- enables live video calls where every participant can be AI-generated. This capability was previously limited to well-resourced nation-state actors. It is now available through commercial tools.

Deepfake video statistics for 2026 also show a shift in target demographics. While non-consensual pornography remains the volume leader at 98%, corporate fraud deepfakes are growing at the fastest rate. Election-related deepfakes affected 38 countries but had less impact than feared in mature democracies (Knight Columbia). The most financially damaging deepfake video category is corporate impersonation -- video calls, pre-recorded executive messages, and fake employee onboarding videos used in identity fraud.

| Finding | Value | Source |
|---|---|---|
| Human accuracy identifying high-quality deepfake videos | 24.5% | DeepStrike / iProov |
| Deepfake videos that are non-consensual porn | 98% | Sensity AI / DeepStrike |
| Estimated deepfakes circulating online | 8 million | DeepStrike |
| Detection accuracy drop in real-world conditions | 45-50% | DeepStrike |
| People who can accurately detect deepfakes | 0.1% | iProov Deepfake Blindspot Study 2025 |

The Detection Gap Is Getting Worse, Not Better

AI detection tools claim 90-96% accuracy in laboratory settings (Intel FakeCatcher achieves 96%). But real-world effectiveness drops 45-50% (DeepStrike). That means a tool achieving 96% accuracy in the lab may perform at just 48% in production. Meanwhile, generation quality improves with every model release. The 0.1% human detection rate (iProov) means we cannot rely on people either. This is a detection arms race that defenders are currently losing.
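The 45-50% drop can be read two ways, and the 48% figure above corresponds to one of them. A small sketch that computes both readings for a 96% lab score:

```python
def real_world_accuracy(lab_pct: float, drop_pct: float) -> tuple[float, float]:
    """Return (percentage-point reading, relative reading) of a reported accuracy drop."""
    points = lab_pct - drop_pct                # e.g. 96% minus 50 points = 46%
    relative = lab_pct * (1 - drop_pct / 100)  # e.g. 96% x (1 - 0.50) = 48%
    return points, relative


for drop in (45, 50):
    points, relative = real_world_accuracy(96, drop)
    print(f"{drop}% drop: {points:.1f}% (points) or {relative:.1f}% (relative)")
```

Under either reading, a 96% lab score lands between roughly 46% and 53% in production, which is why the text describes it as about half.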

🏢 Deepfake Attacks on Business

CEO fraud powered by deepfakes now targets at least 400 companies per day (BrightSide AI). The attack pattern has evolved beyond email-only BEC to include real-time video calls where multiple participants are AI-generated. The Arup Hong Kong case demonstrated this: the target recognised several colleagues on the video call. All were deepfakes (CrowdStrike).

80% of companies have no established protocols or response plans for deepfake-based attacks (Keepnet). This leaves organisations relying on individual employee judgement, yet iProov's data shows that only 0.1% of people can reliably spot a deepfake. Traditional BEC losses remain substantial at $2.77 billion in FBI IC3 reports for 2024, but deepfake-enhanced attacks command significantly higher per-incident losses.

The Arup Incident: Anatomy of a $25.6M Deepfake Scam

The Arup Hong Kong case is the most detailed public example of a corporate deepfake attack. In 2024, a finance team employee received an email from what appeared to be the UK-based CFO. The employee then joined a video conference call. On the call were the CFO and several other colleagues -- all of whom the employee recognised. The conversation appeared normal, and the CFO instructed the employee to make several urgent payments.

Every person on that video call was an AI deepfake. Attackers had downloaded publicly available videos of the colleagues and used AI to generate real-time deepfake avatars with synthesised voices. The employee transferred HK$200 million (US$25.6 million) across 15 transactions before the fraud was discovered. Hong Kong police assessed that the entire attack was orchestrated using deepfake technology derived from publicly available footage.

How Deepfake Attacks on Business Differ from Traditional BEC

Traditional BEC relies on email impersonation. The attacker spoofs an email address or compromises an inbox. Deepfake BEC adds video and voice impersonation. The target sees and hears their colleagues in real-time. The psychological impact is fundamentally different -- email scepticism is common, but questioning a live video call feels socially unacceptable. This is why deepfake attacks achieve higher per-incident losses than email-only BEC.

Warning Signs of a Deepfake Business Attack

Red Flags That May Indicate a Deepfake

- Unusual urgency combined with a request to bypass normal approval processes

- Video call participants with limited facial movement range or fixed backgrounds

- Audio that sounds slightly "flat" or lacks natural ambient room noise

- Requests that come outside normal business hours or via unusual channels

- Reluctance from the "caller" to answer unexpected or personal questions

- Slight lip-sync mismatches, especially during fast speech

- Conversations that feel scripted or lack natural back-and-forth spontaneity

| Finding | Value | Source |
|---|---|---|
| Companies targeted by CEO deepfake fraud per day | 400 | BrightSide AI |
| Companies with no deepfake response plan | 80% | Keepnet Labs |
| FBI BEC losses (2024) | $2.77B | FBI Internet Crime Report 2024 |
| Average cost of a breach initiated by BEC | $4.67M | IBM / HoxHunt |
| BEC share of financially motivated breaches | 58% | Verizon DBIR 2025 |
| Voice clone targets who lost money | 77% | ScamWatch HQ |
| Deepfakes as attacker method in breaches | 35% | IBM Cost of a Data Breach Report 2025 |

Deepfake Risk Assessment

Answer 5 questions to assess your organisation's exposure to deepfake attacks.

1. Does your organisation have a deepfake response plan?

2. Do you use callback verification for financial requests?

3. Are executives' voices publicly available (podcasts, YouTube, etc.)?

4. Do you have multi-person approval for large transfers?

5. Has your team received deepfake awareness training?
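The five questions can be turned into a simple exposure score. The question keys, the equal weighting (one point per risky answer), and the score bands below are hypothetical choices for illustration, not a validated methodology:

```python
# For each question, the answer that indicates higher exposure.
# Keys are hypothetical names for questions 1-5 above; equal weights are an assumption.
RISKY_ANSWER = {
    "has_response_plan": False,               # Q1
    "uses_callback_verification": False,      # Q2
    "executive_voices_public": True,          # Q3
    "requires_multi_person_approval": False,  # Q4
    "had_awareness_training": False,          # Q5
}


def exposure_score(answers: dict[str, bool]) -> int:
    """Count risky answers: 0 is the lowest exposure, 5 the highest."""
    missing = RISKY_ANSWER.keys() - answers.keys()
    if missing:
        raise ValueError(f"unanswered questions: {sorted(missing)}")
    return sum(answers[q] == risky for q, risky in RISKY_ANSWER.items())


def exposure_band(score: int) -> str:
    """Hypothetical bands for reporting the score."""
    if score <= 1:
        return "lower"
    if score <= 3:
        return "moderate"
    return "high"


example = {
    "has_response_plan": False,
    "uses_callback_verification": False,
    "executive_voices_public": True,
    "requires_multi_person_approval": True,
    "had_awareness_training": False,
}
score = exposure_score(example)
print(score, exposure_band(score))  # → 4 high
```

Equal weights keep the sketch readable; an organisation could reasonably weight callback verification and multi-person approval more heavily, since those controls block the transfer itself.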

Nathan House's Analysis: The Corporate Response Gap

80% of companies have no deepfake response plan. 28% of C-suite cyber leaders say deepfakes are what they are least prepared for (WEF). Yet CEO deepfake fraud targets 400 companies daily. The mismatch between threat velocity and organisational preparedness is stark. Companies that had verification protocols -- callback procedures, code words, multi-channel confirmation -- would have caught the Arup scam. That loss was a process failure as much as a technology failure.
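The verification protocols named above can be expressed as a payment-release rule that never consults how convincing the caller looked. A minimal sketch; the directory, threshold, approver count, and field names are all hypothetical:

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical directory of verified contact numbers, maintained separately
# from any incoming email, chat, or video call.
VERIFIED_DIRECTORY = {"cfo": "VERIFIED-CFO-LINE"}


@dataclass
class PaymentRequest:
    requester: str                         # who the request claims to come from
    amount: float                          # USD
    callback_number: Optional[str] = None  # number actually dialled to confirm
    approvers: set[str] = field(default_factory=set)


def may_release(req: PaymentRequest, threshold: float = 100_000, min_approvers: int = 2) -> bool:
    """Release only after a directory callback and, above the threshold, independent approvals."""
    # Control 1: confirmation must go to the directory number, never one supplied in the request.
    expected = VERIFIED_DIRECTORY.get(req.requester)
    if expected is None or req.callback_number != expected:
        return False
    # Control 2: large transfers need approvers other than the requester.
    if req.amount >= threshold and len(req.approvers - {req.requester}) < min_approvers:
        return False
    return True


# A request "from the CFO" made on a video call, before any callback.
req = PaymentRequest(requester="cfo", amount=25_600_000)
print(may_release(req))  # → False
req.callback_number = "VERIFIED-CFO-LINE"
req.approvers = {"finance_lead", "controller"}
print(may_release(req))  # → True
```

The deepfake never enters the decision: a cloned CFO can fill a video call, but unless the attacker also controls the directory line and two approvers, the transfer does not go out.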

🛡️ Deepfake Detection & Defence

Only 0.1% of people can accurately detect AI-generated deepfakes across multiple tests (iProov 2025). When tested specifically on video deepfakes, the human detection rate rises to 24.5% -- still meaning three out of four fakes pass unnoticed (DeepStrike). The technology is outpacing human perception.

AI-based detection tools perform better, with Intel's FakeCatcher achieving 96% accuracy by analysing blood flow patterns in video (Intel). But real-world performance drops 45-50% compared to laboratory conditions (DeepStrike). Multimodal detection systems -- analysing visual, audio, and metadata signals simultaneously -- represent the most promising approach, but even these struggle with the latest generation models.

Why Lab Accuracy Does Not Equal Real-World Performance

The 45-50% accuracy drop between lab and real-world conditions (DeepStrike) deserves examination. Lab tests use high-quality, uncompressed video and audio. Real-world content has been compressed, re-encoded, streamed, and potentially screen-recorded. Each of these transformations strips the subtle artifacts that detection tools rely on. A deepfake shared via WhatsApp or Zoom has been compressed multiple times, removing the micro-level inconsistencies that AI detectors look for.

This explains why deepfake detection remains fundamentally harder than deepfake creation. Generation tools produce high-quality output that degrades gracefully through compression. Detection tools require high-quality input that compression degrades catastrophically. The deepfake detection market is growing at 42% annually to address this gap, but the underlying physics of compression and signal degradation mean detection will likely always lag generation.
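A toy illustration of the compression argument above, not a model of any real codec: a two-sample moving average stands in for lossy compression, and a tiny alternating signal stands in for a generation artifact. The slowly varying content is largely preserved while the artifact is removed entirely:

```python
import math


def moving_average(signal: list[float], window: int = 2) -> list[float]:
    """Crude low-pass filter: each output sample is the mean of `window` neighbours."""
    return [sum(signal[i:i + window]) / window for i in range(len(signal) - window + 1)]


n = 64
content = [math.sin(2 * math.pi * i / n) for i in range(n)]  # slowly varying "real" signal
artifact = [0.01 * (-1) ** i for i in range(n)]              # tiny high-frequency "artifact"
fake = [c + a for c, a in zip(content, artifact)]

# The detector's cue is the difference between the fake and the clean signal.
before = max(abs(f - c) for f, c in zip(fake, content))
after = max(abs(f - c) for f, c in zip(moving_average(fake), moving_average(content)))
print(f"artifact amplitude before: {before:.4f}, after: {after:.4f}")
```

Real codecs are far more sophisticated, but the intuition is the same: detail that the compression step treats as noise is discarded, and a detector keyed on that detail loses its signal.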

Process-Based Defence: What Actually Works

Given the limitations of both human detection (0.1%) and AI detection (45-50% accuracy drop in production), process-based defences offer the most reliable protection. Callback verification -- independently dialling a known number to confirm a request -- would have prevented the Arup scam. Multi-person approval for large transfers adds a second verification layer. Code words shared out-of-band provide a challenge-response mechanism that deepfakes cannot reproduce. These process controls do not depend on detecting the deepfake itself. They make the attack irrelevant.
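A static code word can be overheard and replayed. One hedged variant, assuming a secret exchanged in person in advance, derives a fresh spoken code from a random challenge using HMAC, a standard keyed-hash construction swapped in here for illustration; the function names are hypothetical:

```python
import hashlib
import hmac
import secrets


def make_challenge() -> str:
    """The verifier picks a fresh random challenge for each call."""
    return secrets.token_hex(4)


def response_for(shared_secret: bytes, challenge: str, digits: int = 6) -> str:
    """Derive a short numeric code from the shared secret and the challenge."""
    mac = hmac.new(shared_secret, challenge.encode(), hashlib.sha256).digest()
    number = int.from_bytes(mac[:4], "big") % (10 ** digits)
    return f"{number:0{digits}d}"


def verify(shared_secret: bytes, challenge: str, spoken_code: str) -> bool:
    """Compare in constant time so timing does not leak the expected code."""
    return hmac.compare_digest(response_for(shared_secret, challenge), spoken_code)


secret = b"exchanged in person, never over email"
challenge = make_challenge()            # the verifier reads this aloud
code = response_for(secret, challenge)  # the genuine colleague computes and reads back this
print(verify(secret, challenge, code))  # → True
```

A voice clone can reproduce how someone sounds but, without the secret, cannot compute the code for a challenge it has never seen.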

Deepfake Detection Approaches Compared

| Approach | Accuracy | Pros | Cons |
|---|---|---|---|
| Human Visual | 0.1-24.5% | No cost, no tech needed | Ineffective against quality deepfakes |
| AI Single-Modal | 70-90% | Good for specific media types | Bypassed by new generation models |
| AI Multi-Modal | 90-96% | Best automated approach | 45-50% drop in real-world |
| Process Controls | ~99% | Does not depend on detecting the fake | Requires organisational discipline |
| Layered (AI + Process) | 99%+ | Defence in depth, resilient | Higher implementation cost |

The comparison table above highlights a counterintuitive finding from the deepfake detection data: the most reliable defence is not better detection technology, but better verification processes. Process controls achieve near-perfect effectiveness because they sidestep the detection problem entirely. A callback to a verified number confirms the request regardless of how convincing the deepfake appears. Layering AI detection on top of process controls creates defence in depth.
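A rough way to see why layering helps: if the layers fail independently, an attack succeeds only when every layer misses. This sketch plugs in roughly 48% for AI detection in production and the table's ~99% for process controls. Independence is an assumption; correlated failures, such as an employee pressured into skipping both checks, would weaken the result:

```python
def combined_catch_rate(*layer_catch_rates: float) -> float:
    """Catch rate of independent layers: an attack gets through only if every layer misses."""
    miss = 1.0
    for rate in layer_catch_rates:
        miss *= 1 - rate
    return 1 - miss


ai_real_world = 0.48  # ~96% lab accuracy roughly halved in production
process = 0.99        # "~99%" for process controls, from the table above
print(f"{combined_catch_rate(ai_real_world, process):.2%}")  # → 99.48%
```

Under that assumption the result sits in the same range as the table's 99%+ for a layered approach.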

| Finding | Value | Source |
|---|---|---|
| People who can accurately detect deepfakes | 0.1% | iProov Deepfake Blindspot Study 2025 |
| Human detection rate for deepfake videos | 24.5% | DeepStrike / iProov |
| Intel FakeCatcher detection accuracy | 96% | Intel / Market.us |
| Detection accuracy drop in real-world vs lab | 45-50% | DeepStrike |
| Deepfake detection market size (2026) | $15.7B | Deloitte |
| Organizations with no deepfake response plan | 80% | Keepnet Labs |

Detection Strengths

- AI tools: 96% accuracy (lab conditions)

- Multimodal analysis improves results

- Blood flow detection (Intel FakeCatcher)

- Detection market growing 42% annually

Detection Weaknesses

- Human detection: only 0.1% of people reliably spot fakes

- 45-50% accuracy drop in real-world

- New models defeat older detectors

- 80% of orgs have no response plan

Nathan House's Analysis: Why the Detection Arms Race Favours Attackers

The mathematics favour generation over detection. Creating a deepfake costs pennies and takes minutes. Detecting one requires sophisticated AI models, multimodal analysis, and real-time processing. Intel's FakeCatcher achieves 96% accuracy in the lab -- but 45-50% accuracy drops in production mean roughly half of real-world deepfakes still get through. Every new generation model renders previous detectors partially obsolete. The detection market is growing at 42% CAGR ($15.7 billion by 2026), but this is a reactive investment chasing a threat that evolves faster.

👤 AI-Generated Content & Identity Fraud

85% of businesses are concerned about detecting synthetic identity fraud (Regula). Synthetic identities -- fabricated using a combination of real and AI-generated data -- are the fastest-growing category of financial fraud. Deepfakes now account for 6.5% of all fraud attempts globally (Sumsub), and deepfake fraud attempts have risen 2,137% since 2022.

The detection-to-fraud ratio tells the story: deepfake attempts detected by automated systems quadrupled between 2023 and 2024, yet fraud losses continued to rise. This suggests detection is catching a larger proportion of attempts but the total volume is growing faster than defences can scale. KYC (Know Your Customer) processes are particularly vulnerable, as deepfake-generated identity documents and live video verification bypass traditional checks.

How AI Deepfakes Bypass Identity Verification

Traditional identity verification relies on three factors: something you have (ID document), something you know (personal details), and something you are (biometric check). AI deepfake technology can now defeat all three. Synthetic identity documents pass automated document verification. Large language models generate convincing personal knowledge answers. Deepfake video bypasses live facial recognition checks. Financial institutions report that deepfake-enabled synthetic identities are the fastest-growing fraud vector in account opening.

Synthetic Identity Fraud at Scale

Synthetic identity fraud combines real and fabricated data to create entirely new identities. AI deepfakes accelerate this by generating convincing photos, videos, and voice profiles for these synthetic personas. TransUnion reports US lender exposure to synthetic identity fraud at all-time highs. The combination of AI-generated visual identities with stolen personal data (Social Security numbers, addresses) creates synthetic persons that pass standard verification.

85% of businesses express concern about detecting synthetic identity fraud (Regula). This concern is justified: synthetic identities can maintain clean credit profiles for months or years before "busting out" with large losses. The AI deepfake component makes these identities visually convincing during in-person and video verification, adding a layer of sophistication that traditional fraud detection systems were not designed to catch.

| Finding | Value | Source |
|---|---|---|
| Businesses concerned about synthetic identity fraud | 85% | Regula |
| US lender exposure to synthetic identity fraud | $3.3B | TransUnion |
| Deepfakes as share of all fraud attempts | 6.5% | Sumsub |
| Deepfakes detected increase 2023-2024 | 4x | Sumsub |
| Deepfake fraud increase since 2022 | 2,137% | Sumsub |

Synthetic Identity Is the Quiet Epidemic

While the Arup-style video call scams grab headlines, synthetic identity fraud is the higher-volume threat. TransUnion reports synthetic identity fraud exposure at all-time highs. Combine that with Regula's finding that 85% of businesses are concerned about detection, and Sumsub's data showing deepfakes are now 6.5% of all fraud, and the picture is clear: deepfakes are not just a corporate espionage tool. They are industrialising identity fraud at scale.

🏦 Deepfake Impact by Industry

Financial Services

Financial services face 300x more attacks than other industries (KnowBe4 2025). Deepfakes compound this exposure. The largest known single deepfake incident, at engineering firm Arup, targeted a corporate finance team via multi-participant video call impersonation (see the Deepfake Attacks on Business section). CEO deepfake fraud targets 400 companies daily, with financial institutions as primary targets due to their authority-based approval workflows. US deepfake fraud losses reached $1.1 billion in 2025 (Keepnet), with financial services bearing the largest share.

Elections & Government

38 countries have faced deepfake incidents during elections since 2021 (Surfshark). In India's 2024 election, political parties spent an estimated $50 million on AI-generated content. In the US, AI-generated robocalls mimicking President Biden targeted up to 25,000 New Hampshire voters. However, research found that cheap fakes without AI were used seven times more often than AI-generated deepfakes in the 2024 US election cycle (Knight Columbia).

Media & Entertainment

Non-consensual deepfake pornography targeting celebrities surged in Q1 2025: incidents in that quarter alone were 81% higher than in all of 2024. Major deepfake pornography sites catalogue nearly 4,000 female celebrities. The entertainment industry faces unique risks as public figures provide abundant training data for AI models through interviews, performances, and social media content.

Healthcare & Critical Infrastructure

Healthcare organisations face compounding risks from AI deepfake attacks. Voice cloning can impersonate physicians to authorise procedures or access patient data. Deepfake video can bypass identity verification in telehealth. The healthcare sector already faces the highest breach costs at $11.2 million per incident (IBM 2025), and deepfakes add a new layer of social engineering risk to an already vulnerable sector.

Technology & Social Media

Social media platforms are both vectors and targets for deepfake content. Meta, Google, and X have implemented detection systems, but the volume of content makes comprehensive screening impractical. Social media supplies both the raw material for voice cloning (public video and audio clips) and the distribution channel for deepfake disinformation. The platforms face regulatory pressure from the EU AI Act and US state legislation to label and remove synthetic content.

Insurance & Risk Transfer

The cyber insurance industry is adapting to the deepfake threat. Some carriers have begun asking about deepfake controls in underwriting questionnaires. Callback verification, multi-person approval, and deepfake awareness training may affect premium pricing. Organisations should review whether their cyber insurance explicitly covers AI-enabled social engineering losses, as policy language written before the deepfake era may exclude synthetic media attacks. Losses comparable to the Arup incident -- equivalent to the annual premium of hundreds of SME policies -- highlight the exposure gap.

Regional Deepfake Threat Landscape

Deepfake threats vary by region. North America saw the highest deepfake fraud increase at 3,000% (Onfido). Asia-Pacific hosts the most election-related deepfake incidents, with India spending $50 million on AI-generated political content in 2024. Europe faces the most stringent regulatory environment with the EU AI Act. The Middle East and Africa report lower absolute volumes but faster growth rates as AI tools become more accessible. These regional variations inform where organisations should prioritise deepfake cybersecurity investment based on their geographic footprint.

| Finding | Value | Source |
|---|---|---|
| Financial services breach cost | $6.08M | IBM Cost of a Data Breach Report 2025 |
| Financial attack frequency vs others | 300x | KnowBe4 Financial Sector Threats Report 2025 |
| Countries facing election deepfakes | 38 | Surfshark |
| Companies targeted by CEO fraud daily | 400 | BrightSide AI |
| US deepfake fraud losses | $1.1B | Keepnet Labs |
| Global deepfake fraud losses Q1 2025 | $200M+ | DeepStrike |

Nathan House's Analysis: Financial Services Bear the Heaviest Burden

Financial services face a convergence of deepfake risks. They are targeted 300x more than other industries (KnowBe4), they handle high-value transactions that deepfake CEO fraud exploits, and their KYC processes are vulnerable to synthetic identities. The 400 companies targeted daily by CEO deepfake fraud are disproportionately financial institutions. Yet the election data provides a counterpoint: in mature democracies, deepfake disinformation had less impact than feared in 2024. The real threat is financial, not political.

🧠 Public Awareness & Preparedness

The World Economic Forum's Global Cybersecurity Outlook 2025 reveals a growing awareness of the deepfake threat, but preparedness is not keeping pace. 21% of cybersecurity managers say deepfakes are what they are least prepared for -- up from 3% in the previous year, a 7x increase. At the C-suite level, 28% of cyber leaders cite deepfakes as their primary preparedness gap, up from 6% (WEF 2025).
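A "7x increase" here is a ratio of two percentages, not a rise of seven percentage points. The short Python sketch below (function names are illustrative) computes both measures for the WEF figures quoted above; on the same basis, the C-suite figure works out to roughly 4.7x.

```python
# Share of respondents naming deepfakes as their biggest preparedness gap,
# as quoted above from the WEF Global Cybersecurity Outlook 2025:
# (previous year %, 2025 %)
WEF_SHARES = {
    "cybersecurity managers": (3.0, 21.0),
    "C-suite cyber leaders": (6.0, 28.0),
}

def fold_change(before: float, after: float) -> float:
    """How many times larger the new share is than the old one."""
    return after / before

def point_change(before: float, after: float) -> float:
    """Absolute rise in percentage points."""
    return after - before

for group, (before, after) in WEF_SHARES.items():
    print(f"{group}: {fold_change(before, after):.1f}x, "
          f"+{point_change(before, after):.0f} percentage points")
# cybersecurity managers: 7.0x, +18 percentage points
# C-suite cyber leaders: 4.7x, +22 percentage points
```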

80% of companies have no established protocols for handling deepfake-based attacks (Keepnet). 25% of adults have already experienced an AI voice scam (McAfee). The 0.1% human detection rate (iProov) means awareness alone is insufficient -- organisations need technical controls, verification procedures, and incident response plans specifically designed for synthetic media attacks.

The Awareness vs Preparedness Gap

The WEF data reveals an important distinction between awareness and preparedness. More cybersecurity leaders are identifying deepfakes as a concern (managers went from 3% to 21%, C-suite from 6% to 28%). But knowing about a threat and being prepared for it are not the same thing. 80% of companies lack response plans. Most organisations have not run deepfake simulation exercises. Few have updated their financial controls to account for AI-impersonated executives.

Consumer awareness is also low relative to the threat. 25% of adults have encountered AI deepfake voice scams (McAfee), but far fewer recognise the technology behind the attack. Many victims report believing the voice was genuine even in hindsight. The "indistinguishable threshold" means that traditional advice -- "listen carefully for anomalies" -- is increasingly ineffective. Deepfake detection requires technical controls, not heightened attention.

| Finding | Value | Source |
|---|---|---|
| Managers least prepared for deepfakes (up from 3%) | 21% | WEF Global Cybersecurity Outlook 2025 |
| C-suite least prepared for deepfakes (up from 6%) | 28% | WEF Global Cybersecurity Outlook 2025 |
| Companies with no deepfake response plan | 80% | Keepnet Labs |
| People who can detect deepfakes | 0.1% | iProov Deepfake Blindspot Study 2025 |
| Adults who have experienced AI voice scam | 25% | McAfee |

The Preparedness Gap Is Widening

The WEF data reveals a paradox. Deepfake awareness is rising (managers citing it jumped 7x from 3% to 21%), but preparedness is lagging (80% have no response plan). Awareness without action is dangerous -- it creates a false sense of progress. The organisations that will survive deepfake attacks are not the ones that know about the threat. They are the ones with callback verification, multi-channel authentication, and staff trained to question any unusual financial request regardless of how convincing the caller appears.

⚖️ Deepfake Regulation & Legislation

47 US states have enacted deepfake legislation as of January 2026, with 169 total laws passed since 2022 (MultiState). 82% of all state deepfake laws were enacted in just the last two years, reflecting the rapid acceleration of legislative response. State legislatures introduced 146 new bills in 2025 alone.

At the federal level, the TAKE IT DOWN Act was signed into law in May 2025, requiring platforms to remove non-consensual intimate imagery within 48 hours. The DEFIANCE Act passed the US Senate unanimously in January 2026, establishing a federal right of action for victims of sexually explicit deepfakes.

The EU AI Act's transparency obligations, which apply from August 2026, require AI-generated content to be labelled in a machine-readable format. The Act's highest penalties reach 35 million euros or 7% of global annual turnover, whichever is higher, for prohibited AI practices; breaches of the transparency rules, including labelling, carry fines of up to 15 million euros or 3%. China introduced mandatory AI content labelling rules effective September 2025. 38 countries have experienced election-related deepfake incidents (Surfshark), driving regulation globally.
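The "whichever is higher" rule means the fixed euro cap only binds for smaller companies; in both tiers below, the percentage takes over above €500 million in turnover. A minimal Python sketch, assuming the fine ceilings in Article 99 of the AI Act (Regulation (EU) 2024/1689): €35M or 7% for prohibited practices, and €15M or 3% for most other obligations, including the Article 50 transparency rules that cover labelling. It computes only the statutory maximum, not a likely fine, and ignores the separate rule for SMEs and start-ups; the tier names and function are hypothetical.

```python
# Sketch of the EU AI Act's fine ceilings, assuming the Article 99 tiers of
# Regulation (EU) 2024/1689. Tier names are illustrative. This returns only the
# statutory maximum; it ignores the SME/start-up rule (lower of the two amounts)
# and the factors regulators weigh when setting an actual fine.
PENALTY_TIERS = {
    # tier: (fixed cap in EUR, share of worldwide annual turnover)
    "prohibited_practices": (35_000_000, 0.07),
    "other_obligations": (15_000_000, 0.03),  # includes Article 50 labelling duties
}

def max_fine_eur(global_turnover_eur: float, tier: str) -> float:
    """Upper bound on the fine: whichever is higher of the cap and the turnover share."""
    fixed_cap, turnover_share = PENALTY_TIERS[tier]
    return float(max(fixed_cap, turnover_share * global_turnover_eur))

# A company with EUR 2 billion in global annual turnover:
print(f"{max_fine_eur(2_000_000_000, 'prohibited_practices'):,.0f}")  # 140,000,000
print(f"{max_fine_eur(2_000_000_000, 'other_obligations'):,.0f}")     # 60,000,000
# A company with EUR 100 million turnover hits the fixed cap instead:
print(f"{max_fine_eur(100_000_000, 'prohibited_practices'):,.0f}")    # 35,000,000
```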

US Federal Legislation

The TAKE IT DOWN Act, signed into law in May 2025, creates federal requirements for online platforms to remove non-consensual intimate imagery -- including AI deepfake content -- within 48 hours of a valid notice. The DEFIANCE Act, passed unanimously by the US Senate in January 2026, establishes a federal right of action allowing victims of sexually explicit deepfakes to sue creators, distributors, and knowing hosts of such content.
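For platform trust-and-safety teams, the 48-hour clock is simple to track once a notice is logged. A minimal Python sketch; it assumes the window starts when a valid notice is received and leaves aside what counts as a "valid" notice under the Act. The helper names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# 48-hour removal window described above for the TAKE IT DOWN Act.
# Assumption: the clock starts when a valid notice is received.
REMOVAL_WINDOW = timedelta(hours=48)

def removal_deadline(notice_received_at: datetime) -> datetime:
    """Latest time by which the reported content should be taken down."""
    if notice_received_at.tzinfo is None:
        # Naive timestamps make deadlines ambiguous across time zones.
        raise ValueError("notice_received_at must be timezone-aware")
    return notice_received_at + REMOVAL_WINDOW

def is_overdue(notice_received_at: datetime, now: datetime) -> bool:
    """True once the removal window has elapsed."""
    return now > removal_deadline(notice_received_at)

notice = datetime(2026, 3, 2, 14, 30, tzinfo=timezone.utc)
print(removal_deadline(notice).isoformat())  # 2026-03-04T14:30:00+00:00
```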

International Frameworks

Beyond the US, regulatory responses to AI deepfake threats are accelerating globally. The EU AI Act represents the most comprehensive framework, requiring all AI-generated synthetic content to carry machine-readable labels. Denmark's proposed legislation would allow victims to request deepfake content removal and demand compensation. China's measures require traceability for all AI-generated media. These deepfake statistics for 2026 reflect a regulatory environment that is maturing rapidly, though enforcement mechanisms remain underdeveloped in most jurisdictions.

| Finding | Value | Source |
|---|---|---|
| US states with deepfake legislation | 47 | MultiState |
| Deepfake laws enacted since 2022 | 169 | MultiState |
| Maximum EU AI Act penalty | €35M or 7% of global turnover | European Commission |
| Countries facing election deepfakes | 38 | Surfshark |

Regulation Is Moving Fast -- But Enforcement Lags Behind

169 laws in four years is rapid legislative action by any measure. But legislation without enforcement is paper protection. The EU AI Act penalties are significant (up to 7% of global turnover), but the Act does not fully take effect until August 2026. The TAKE IT DOWN Act requires removal within 48 hours, but detection and reporting remain the victim's burden. The gap between passing laws and enforcing them will determine whether regulation actually curbs deepfake harm.

Key Regulatory Dates for 2026

TAKE IT DOWN Act (US)

Platforms must remove non-consensual intimate imagery within 48 hours of valid notice.

China AI Labelling Rules

Mandatory labelling and traceability for all AI-generated synthetic media in China.

DEFIANCE Act (US)

Federal right of action for victims of non-consensual sexually explicit deepfakes. Passed Senate unanimously.

EU AI Act (Full Effect)

AI-generated content must carry machine-readable labels. Penalties reach up to 35M EUR or 7% of global turnover for the most serious violations; labelling breaches carry up to 15M EUR or 3%.

For organisations operating internationally, the EU AI Act's August 2026 deadline represents the most consequential regulatory milestone. The Act's top penalty tier (up to 7% of global turnover) exceeds GDPR's 4% maximum, which could make it more financially impactful than GDPR for many organisations. Compliance will require implementing AI content labelling, maintaining audit trails for AI-generated media, and ensuring disclosure of synthetic content at first interaction.

💹 Deepfake Market & Technology

Detection Market

- $15.7 billion by 2026 (Deloitte)

- Growing at 42% CAGR

- Multimodal analysis leading approach

- Intel FakeCatcher: 96% lab accuracy

Generation Market

- $850 million in 2025 (MarketsandMarkets)

- Projected $7.27 billion by 2031

- 42.8% CAGR

- Open-source tools proliferating

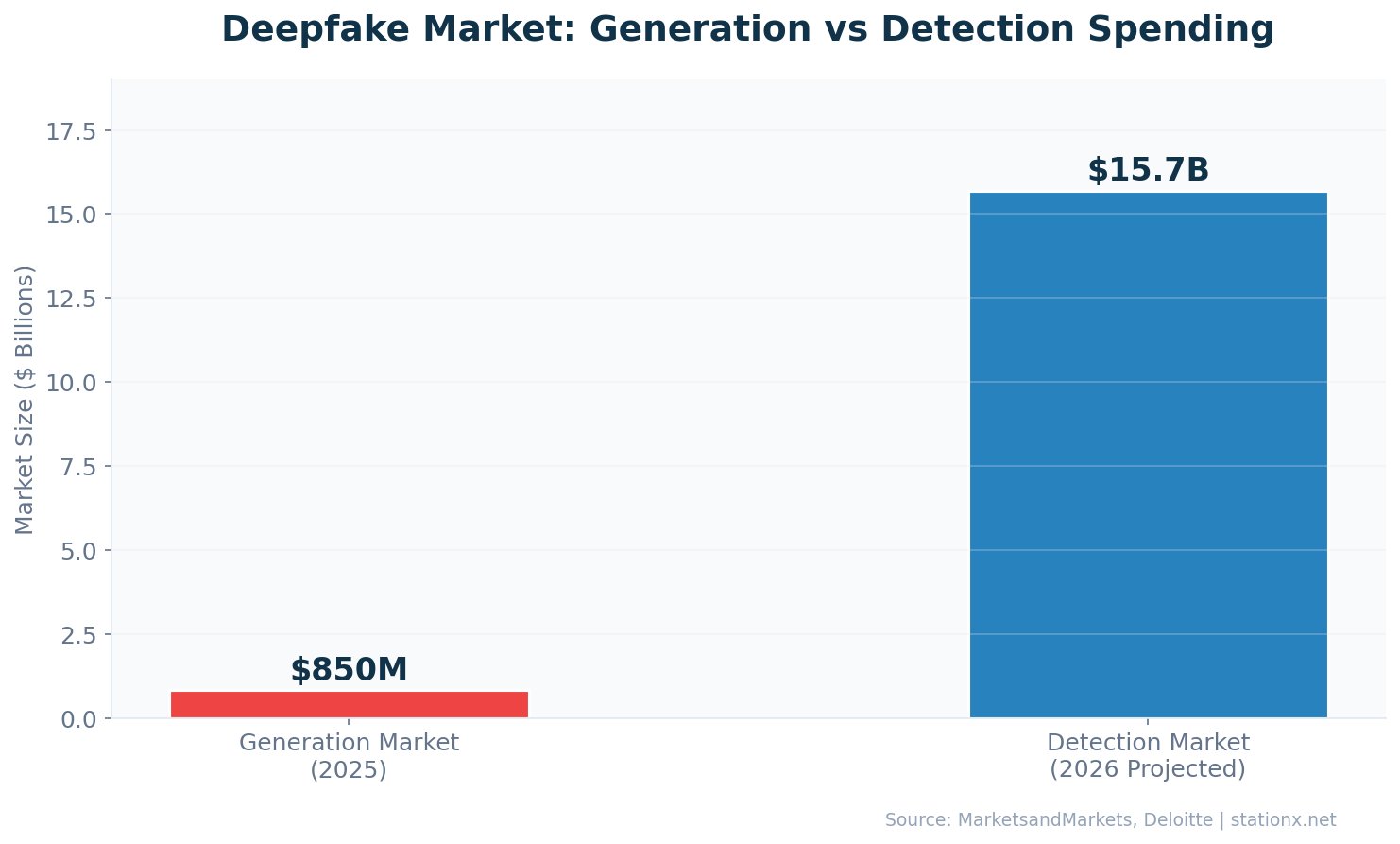

The deepfake detection market is projected to reach $15.7 billion by 2026, growing at approximately 42% annually (Deloitte). This dwarfs the deepfake generation market at $850 million in 2025 (MarketsandMarkets), reflecting the asymmetry of the problem: detection costs more than generation. The generation market is projected to reach $7.27 billion by 2031, growing at 42.8% CAGR.
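The quoted growth rate can be checked against the start and end values. A minimal Python sketch of the standard compound annual growth rate formula, (end / start)^(1 / years) - 1, applied to the MarketsandMarkets figures above; it gives about 43%, and the small difference from the quoted 42.8% is plausibly due to rounding in the headline figures.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1 / years) - 1

# MarketsandMarkets figures quoted above, in $ billions.
rate = implied_cagr(0.85, 7.27, years=2031 - 2025)
print(f"implied CAGR: {rate:.1%}")  # implied CAGR: 43.0%
```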

The detection-to-generation market ratio of 18.5x ($15.7B vs $850M) illustrates the defender's dilemma: the market for detecting deepfakes is roughly 18 times the size of the market for creating them. This ratio will likely narrow as generation tools commoditise, but the investment asymmetry highlights how deepfakes, like ransomware before them, cost far more to defend against than to deploy.
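One caveat on the 18.5x figure: it divides a 2026 projection by a 2025 estimate. The Python sketch below computes the raw ratio and, under the assumption that the generation market grows for one year at its quoted 42.8% CAGR, a year-aligned 2026 ratio of roughly 13x. Either way, detection spending remains more than an order of magnitude larger.

```python
detection_2026_b = 15.7    # Deloitte projection for 2026, $ billions
generation_2025_b = 0.85   # MarketsandMarkets estimate for 2025, $ billions
generation_cagr = 0.428    # MarketsandMarkets growth rate

raw_ratio = detection_2026_b / generation_2025_b

# Assumption: the generation market grows one year at its quoted CAGR,
# so both numbers refer to 2026.
generation_2026_b = generation_2025_b * (1 + generation_cagr)
aligned_ratio = detection_2026_b / generation_2026_b

print(f"raw ratio: {raw_ratio:.1f}x")               # raw ratio: 18.5x
print(f"year-aligned ratio: {aligned_ratio:.1f}x")  # year-aligned ratio: 12.9x
```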

Technology Trends Shaping Deepfake Detection

Multimodal detection represents the leading approach in the deepfake detection market. These systems analyse visual, auditory, and metadata signals simultaneously, catching inconsistencies that single-mode detectors miss. Intel's FakeCatcher uses photoplethysmography -- analysing subtle blood flow patterns in facial video -- to achieve 96% lab accuracy. Pindrop's voice liveness detection focuses on audio spectral signatures. The most effective enterprise deployments combine multiple detection modalities.
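Multimodal systems differ in how they combine signals. One simple approach is late fusion: each modality's detector outputs a probability, and the system combines them. The Python sketch below is a hypothetical illustration of that idea, not how FakeCatcher, Pindrop, or any named product works; the weights and thresholds are made up. It also shows why combining modalities helps: a strong audio signal can flag a call that the visual detector alone would pass.

```python
from dataclasses import dataclass

@dataclass
class ModalityScore:
    name: str          # e.g. "visual", "audio", "metadata"
    fake_prob: float   # detector's estimated probability that the input is synthetic (0-1)
    weight: float      # hypothetical trust weight for this detector

def fused_fake_score(scores: list[ModalityScore]) -> float:
    """Late fusion: weighted average of the per-modality probabilities."""
    total_weight = sum(s.weight for s in scores)
    if total_weight <= 0:
        raise ValueError("at least one modality needs a positive weight")
    return sum(s.fake_prob * s.weight for s in scores) / total_weight

def flag_for_review(scores: list[ModalityScore], threshold: float = 0.5,
                    single_modality_alarm: float = 0.9) -> bool:
    """Flag if the fused score crosses the threshold or any one detector is highly confident."""
    return (fused_fake_score(scores) >= threshold
            or any(s.fake_prob >= single_modality_alarm for s in scores))

call = [
    ModalityScore("visual", fake_prob=0.30, weight=1.0),    # video alone looks fine
    ModalityScore("audio", fake_prob=0.92, weight=1.0),     # voice shows synthetic artefacts
    ModalityScore("metadata", fake_prob=0.20, weight=0.5),
]
print(round(fused_fake_score(call), 3), flag_for_review(call))  # 0.528 True
```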

The generation side of the market is driven by both legitimate and malicious use cases. Legitimate applications include entertainment, accessibility, and corporate training. Malicious applications -- fraud, disinformation, non-consensual pornography -- drive the cybersecurity response. The $850 million generation market growing at 42.8% CAGR to $7.27 billion by 2031 (MarketsandMarkets) indicates sustained investment in deepfake creation capabilities.

Real-time deepfake generation is the frontier technology that most concerns cybersecurity professionals. The Arup scam used real-time video deepfakes of multiple participants on a live call. As real-time generation improves, the window for detection shrinks. Detection systems that can only analyse recorded content will become insufficient -- real-time, in-stream detection will be required for video conferencing and voice calls.

| Finding | Value | Source |
|---|---|---|
| Deepfake detection market (2026) | $15.7B | Deloitte |
| Deepfake AI generation market (2025) | $850M | MarketsandMarkets |
| Projected deepfake AI market (2031) | $7.27B | MarketsandMarkets |
| Intel FakeCatcher accuracy (lab conditions) | 96% | Intel / Market.us |
| GenAI fraud CAGR | 32% | Deloitte |

Nathan House's Analysis: The 18.5x Cost Asymmetry

The detection market ($15.7B) is 18.5 times the size of the generation market ($850M). This is the clearest signal that deepfakes follow the classic attacker-defender asymmetry in cybersecurity. Creating a convincing deepfake costs pennies using open-source tools. Detecting one requires enterprise-grade AI infrastructure. Both markets are growing at ~42% CAGR, so the ratio between them holds steady while the absolute gap widens. GenAI fraud is compounding at 32% annually toward $40 billion by 2027 (Deloitte). Organisations that delay investment in detection and verification processes are making a bet that the threat will plateau, and every data point says it will not.
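Equal growth rates keep the ratio fixed but widen the absolute gap, which is why the asymmetry persists. A minimal Python sketch, assuming both markets compound at the same ~42% rate from the figures quoted above (a simplification, since the sources report slightly different rates and base years):

```python
def project(value_b: float, cagr: float, years: int) -> float:
    """Value after `years` of compound growth at rate `cagr`."""
    return value_b * (1 + cagr) ** years

def ratio_and_gap(detection_b: float, generation_b: float, cagr: float,
                  horizon: int) -> list[tuple[int, float, float]]:
    """Detection/generation ratio and absolute gap ($B) for each year up to `horizon`."""
    rows = []
    for year in range(horizon + 1):
        d = project(detection_b, cagr, year)
        g = project(generation_b, cagr, year)
        rows.append((year, d / g, d - g))
    return rows

# Simplification: both markets start from the figures above and share a 42% CAGR.
for year, ratio, gap in ratio_and_gap(15.7, 0.85, 0.42, horizon=3):
    print(f"+{year} years: ratio {ratio:.1f}x, gap ${gap:.1f}B")
```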

🎯 Key Takeaways

- Volume is exploding: 8 million deepfakes online, growing at 900% annually. This is no longer a novelty -- it is an industrial-scale threat.

- Financial impact is proven: the largest single deepfake scam cost Arup $25.6 million, US losses hit $1.1 billion in 2025, and Deloitte projects US GenAI-enabled fraud losses of $40 billion by 2027. Deepfakes are now a billion-dollar fraud vector.

- Voice cloning is the most dangerous vector: 3 seconds of audio, 85% match accuracy, 1,633% vishing surge, 77% victim conversion rate. Every executive with a recorded voice is a potential target.

- Detection is losing the arms race: 0.1% human accuracy, 45-50% real-world accuracy drop for AI tools, 80% of companies have no response plan.

- Regulation is accelerating but enforcement lags: 47 US states with laws, EU AI Act penalties up to 7% of turnover, but detection and reporting remain the victim's burden.

- The cost asymmetry favours attackers: Detection market ($15.7B) is 18.5x the generation market ($850M). Creating deepfakes is cheap; defending against them is expensive.

The data points converge on one conclusion: deepfakes have crossed from a theoretical risk to a measured, quantified, and rapidly growing threat. The organisations that fare best will be those that invest in verification processes (callback procedures, multi-channel authentication, code words), detection technology (multimodal AI systems), and staff training (deepfake awareness drills) before an Arup-scale incident happens to them.

Recommended Actions Based on the Data

Immediate (This Quarter)

- Implement callback verification for all financial requests over $10,000

- Establish code words for executive-level approval calls

- Audit publicly available executive audio and video

- Brief finance teams on the Arup incident pattern

- Create a deepfake incident response plan
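The first of these controls can be encoded directly in a payment workflow. A minimal Python sketch of a hypothetical release check: requests over the $10,000 threshold require a callback to a number taken from an internal directory (never from the request itself) plus two independent approvers, reflecting the multi-person approval this article recommends. Class, field, and function names are illustrative, not any particular finance system's API.

```python
from dataclasses import dataclass, field

CALLBACK_THRESHOLD_USD = 10_000  # threshold from the recommendation above

@dataclass
class PaymentRequest:
    amount_usd: float
    # Set only after calling back on a number from the internal directory,
    # never one supplied in the request itself.
    callback_confirmed: bool = False
    approvers: set[str] = field(default_factory=set)

def release_allowed(req: PaymentRequest, min_approvers: int = 2) -> tuple[bool, str]:
    """Hypothetical control: requests over the threshold need a callback and multi-person approval."""
    if req.amount_usd <= CALLBACK_THRESHOLD_USD:
        return True, "at or below callback threshold"
    if not req.callback_confirmed:
        return False, "callback to a known number not completed"
    if len(req.approvers) < min_approvers:
        return False, f"needs {min_approvers} independent approvers"
    return True, "callback and multi-person approval satisfied"

# A convincing video call alone is not enough to release funds:
urgent = PaymentRequest(25_600_000, approvers={"cfo"})
print(release_allowed(urgent))  # (False, 'callback to a known number not completed')
```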

Strategic (This Year)

- Evaluate deepfake detection tools for video conferencing

- Deploy voice liveness detection on phone systems

- Add deepfake scenarios to security awareness training

- Review insurance coverage for AI-enabled fraud losses

- Monitor regulatory requirements (EU AI Act compliance)

These deepfake statistics for 2026 show that the window for proactive defence is narrowing. At 900% annual content growth and a 32% CAGR in fraud losses, the cost of inaction compounds faster than most security budgets can accommodate. The organisations that act on this data now will be positioned to resist deepfake attacks. Those that wait for their own Arup-scale incident will pay a tuition fee that dwarfs most annual security budgets.

Executive Briefing: The Deepfake Threat in 60 Seconds

For board presentations and executive briefings on AI deepfake threats, the six numbers above tell the complete story. The largest single scam proves the damage ceiling. The 3-second clone time proves the accessibility. The 0.1% detection rate proves human defences are insufficient. The 8 million volume proves the scale. The 80% without plans proves the response gap. The $40 billion projection proves the trend. Every deepfake cybersecurity conversation should start with these numbers.

How to Present These Deepfake Statistics to Leadership

When presenting deepfake statistics to non-technical executives, lead with the financial impact. Arup-scale losses and the 187x cost escalation over traditional BEC resonate with finance-focused leadership. Follow with the 80% of companies lacking response plans to create urgency. Close with specific verification controls -- callback procedures, code words, multi-person approval -- that are implementable within weeks, not months.

Avoid leading with technology. Deepfake detection technology is important but secondary to process controls. The Arup scam did not succeed for lack of better deepfake detection; it succeeded because basic verification procedures were not in place. Start with process, then layer technology. This framing helps executives understand that deepfake defence does not require massive capital expenditure; it requires procedural discipline.

❓ Frequently Asked Questions

How common are deepfakes?

An estimated 8 million deepfakes circulate online as of 2025, up from 500,000 in 2023. Deepfake content is growing at approximately 900% annually. Deepfakes now account for 6.5% of all fraud attempts globally (Sumsub).
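The two growth figures in this answer measure different things. Going from 500,000 to 8 million in two years is a 16x rise, which corresponds to about 300% compound annual growth; sustained 900% annual growth would mean a 100x rise over the same period, so the 900% estimate presumably comes from a different measurement or time window. A quick Python check of the arithmetic:

```python
def annual_growth_rate(start: float, end: float, years: float) -> float:
    """Compound annual growth rate as a fraction (3.0 means 300%)."""
    return (end / start) ** (1 / years) - 1

start, end, years = 500_000, 8_000_000, 2   # deepfakes online, 2023 -> 2025 (figures above)
print(f"overall: {end / start:.0f}x")                            # overall: 16x
print(f"per year: {annual_growth_rate(start, end, years):.0%}")  # per year: 300%
# Sustained 900% growth means multiplying by 10 every year:
print(f"two years at 900%/yr: {(1 + 9.0) ** years:.0f}x")        # two years at 900%/yr: 100x
```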

How much does deepfake fraud cost?

The largest single deepfake scam cost Arup $25.6 million (CrowdStrike). US deepfake fraud losses reached $1.1 billion in 2025 (Keepnet). Deloitte projects GenAI-enabled fraud losses will reach $40 billion in the US by 2027.

Can people detect deepfakes?

Only 0.1% of people can reliably identify AI-generated deepfakes (iProov 2025). For video deepfakes specifically, the human detection rate is 24.5% (DeepStrike). AI detection tools achieve up to 96% accuracy in lab conditions but drop 45-50% in real-world use.

How quickly can a voice be cloned?

As little as 3 seconds of audio is needed to create a voice clone with 85% accuracy (McAfee). 25% of adults have already experienced an AI voice scam. Vishing attacks using cloned voices surged 1,633% in Q1 2025.

How many countries have deepfake laws?

47 US states have enacted deepfake legislation with 169 total laws since 2022 (MultiState). The EU AI Act requires AI content labelling effective August 2026 with penalties up to 35 million euros or 7% of global turnover. 38 countries have experienced election-related deepfakes (Surfshark).

What is the deepfake detection market worth?

The deepfake detection market is projected to reach $15.7 billion by 2026, growing at approximately 42% annually (Deloitte). The deepfake AI generation market is $850 million in 2025, projected to reach $7.27 billion by 2031 (MarketsandMarkets). Detection spending is 18.5x the generation market.

How do deepfake attacks target businesses?

Deepfake attacks on businesses primarily use CEO impersonation via video calls and voice cloning. The largest single incident -- the Arup case -- used AI-generated clones of multiple colleagues on a live video call to authorise fraudulent transfers. CEO deepfake fraud targets approximately 400 companies per day (BrightSide AI). 80% of companies have no deepfake response plan (Keepnet).

What percentage of deepfakes are pornographic?

98% of deepfake videos online are non-consensual pornography, with 99-100% of victims being women (Sensity AI). The volume of deepfake pornographic content produced in 2023 was 464% higher than 2022. Financial fraud deepfakes, while a smaller share by volume, are growing fastest.

Are deepfakes used in elections?

38 countries have faced deepfake incidents during elections since 2021, affecting 3.8 billion people (Surfshark). Notable incidents include AI-generated robocalls mimicking President Biden in the 2024 New Hampshire primary. However, research found that non-AI cheap fakes were used seven times more often than AI deepfakes in the 2024 US election cycle.

About This Data

This article draws from 1,472 statistics aggregated from 50+ authoritative sources including IBM Cost of a Data Breach, Verizon DBIR, CrowdStrike Global Threat Report, WEF Global Cybersecurity Outlook, FBI IC3, ISC2 Cybersecurity Workforce Study, Sophos, Gartner, Mandiant M-Trends, and Ponemon Institute reports.

Derived statistics (marked "Nathan House's Analysis") are computed by cross-referencing data from multiple sources, for example by comparing breach costs across industries using IBM data, or by validating ransomware trends across Verizon, Sophos, and HIPAA Journal findings.

All statistics include inline source citations with links to primary sources. Data spans 2023-2026, with preference given to the most recent available figures. Last updated: May 2026.

How to Use This Data

Security professionals can use these deepfake statistics to build business cases for deepfake detection investment, justify verification process improvements, and benchmark their organisation's preparedness against industry data. Use the derived statistics and contrast boxes to highlight cost differentials that resonate with executive decision-makers.

This page is updated monthly as new reports are published. Bookmark it and return for the latest data. If you spot an outdated statistic or want to suggest a source, contact us.

Key Sources for This Article

Fraud & Financial Impact

- CrowdStrike Global Threat Report 2025

- Deloitte Center for Financial Services

- Onfido Identity Fraud Report 2024

- Sumsub Identity Fraud Report

- FBI IC3 Annual Report 2024

Voice Cloning & Detection

- McAfee AI Voice Scam Research 2024

- Pindrop Voice Intelligence Report 2025

- iProov Deepfake Blindspot Study 2025

- Intel FakeCatcher Research

- DeepStrike Deepfake Statistics 2025

Market & Industry

- MarketsandMarkets Deepfake AI Report

- Keepnet Labs Deepfake Trends 2026

- KnowBe4 Financial Sector Report 2025

- Regula Deepfake Trends 2024

Regulation & Awareness

- WEF Global Cybersecurity Outlook 2025

- MultiState Legislative Tracker 2026

- Surfshark Election Deepfake Research

- European Commission AI Act

About This Deepfake Statistics Collection

This article aggregates 40+ deepfake statistics from our research database, drawn from 30+ authoritative sources. All statistics include inline source citations with links to primary reports.

Derived statistics (marked "Nathan House's Analysis") are computed by cross-referencing data from multiple independent sources. For example, the 187x cost escalation compares CrowdStrike's Arup incident figure with FBI IC3's average BEC losses. The 18.5x market ratio compares Deloitte's detection market with MarketsandMarkets' generation market data.

These derived insights are original to StationX and represent the kind of cross-referencing analysis that distinguishes research from aggregation. No single source contains all these data points -- combining them reveals patterns that individual reports cannot show.

About the Author

Nathan House, StationX

Nathan House is a cybersecurity expert with 30 years of hands-on experience. He holds OSCP, CISSP, and CEH certifications, has secured £71 billion in UK mobile banking transactions, and has worked with clients including Microsoft, Cisco, BP, Vodafone, and VISA. Named Cyber Security Educator of the Year 2020 and a UK Top 25 Security Influencer 2025, Nathan is a featured expert on CNN, Fox News, and NBC. He founded StationX, which has trained over 500,000 students in cybersecurity.