Data Privacy Statistics [2026]: 51+ Laws, Fines & Trends

172 countries now enforce data privacy laws (Greenleaf 2025). GDPR regulators have issued €7.1 billion in fines since 2018, with Meta's €1.2 billion penalty leading the pack (DLA Piper 2026). In the US, 20 states have passed comprehensive privacy legislation with no federal law in sight.

You'll find 51+ data privacy statistics across 9 categories below — from global legislation and GDPR enforcement to consumer attitudes and AI governance — sourced from Cisco, IBM, DLA Piper, IAPP, and 15+ authoritative reports. Each section includes original analysis cross-referencing multiple sources to surface insights you won't find elsewhere.

Key Takeaways

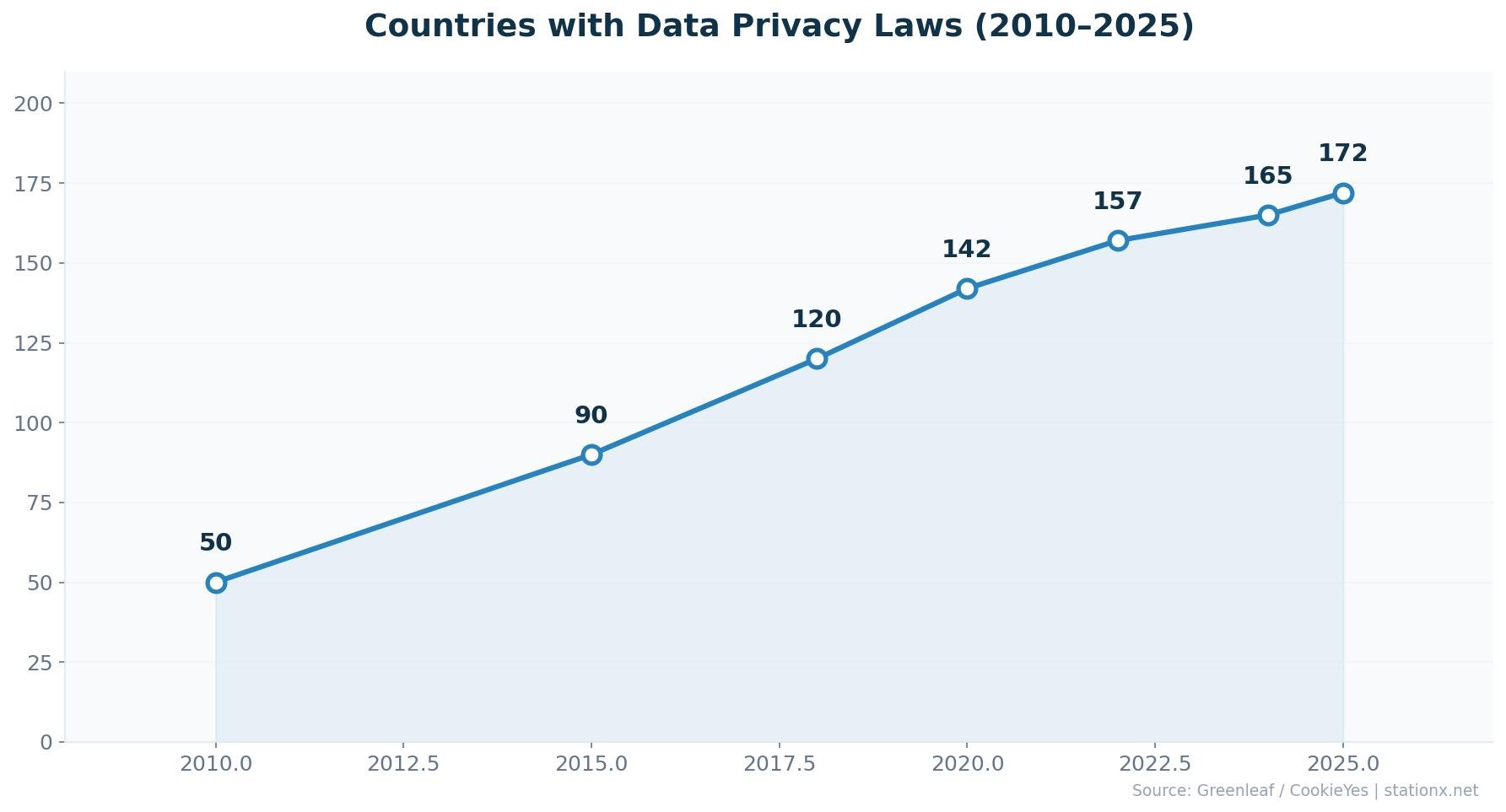

- 172 countries now have data protection laws — 79% of all nations worldwide (Greenleaf 2025)

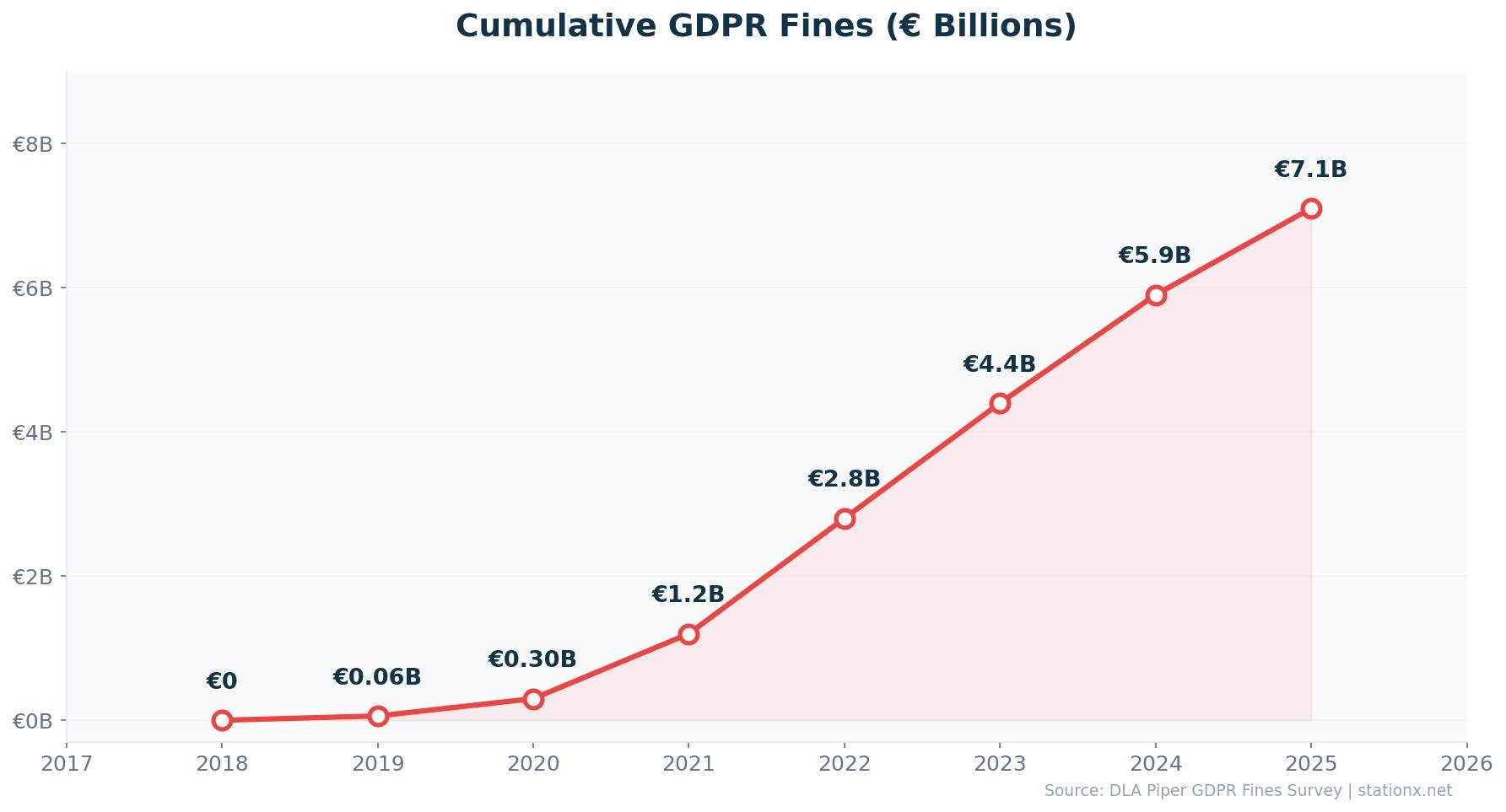

- €7.1B in GDPR fines issued since May 2018, up 21% from the prior year (DLA Piper 2026)

- 400+ breach notifications daily — a GDPR record, up 22% year-over-year (DLA Piper 2026)

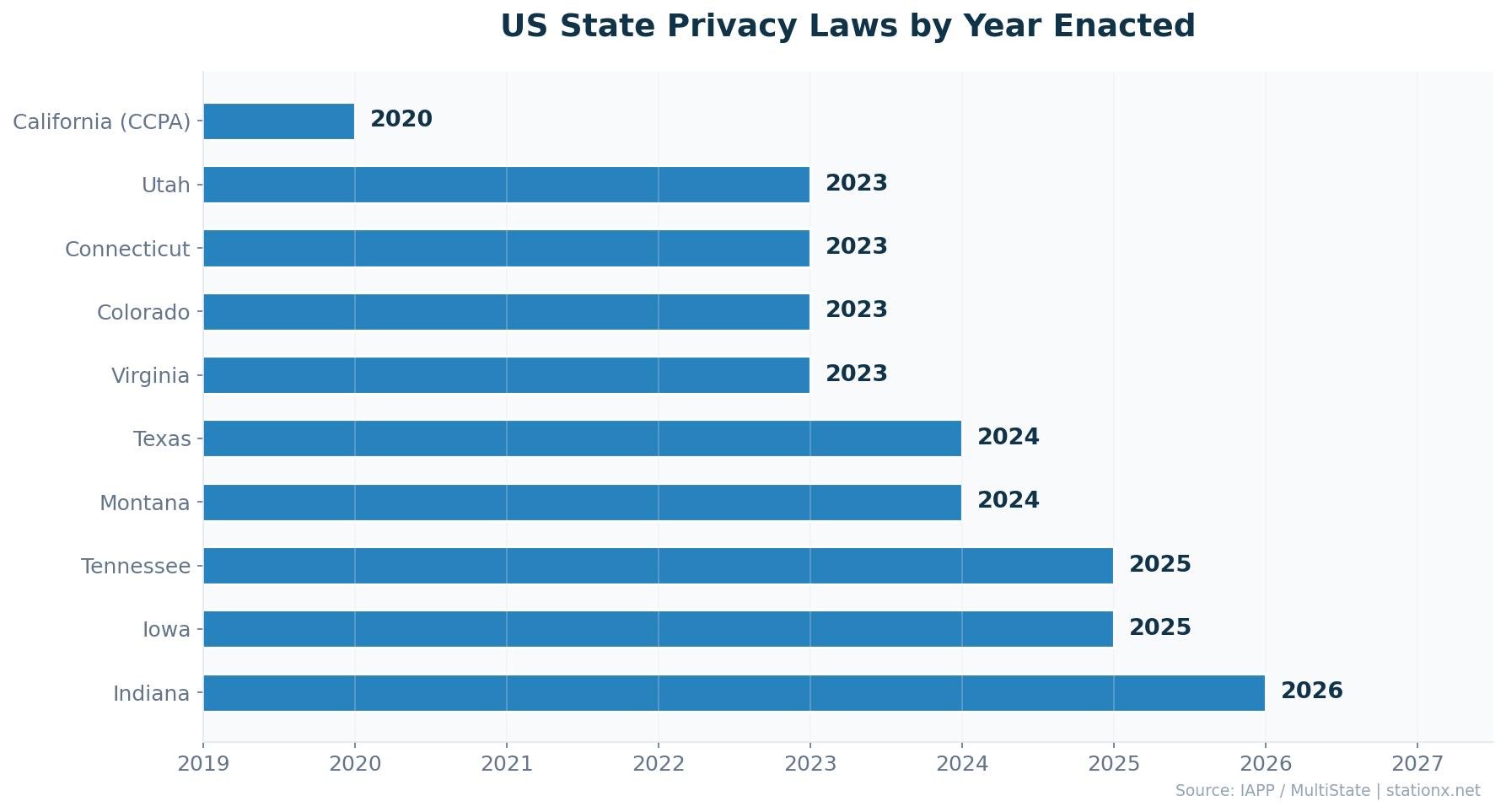

- 20 US states have comprehensive privacy laws, with Indiana, Kentucky, and Rhode Island taking effect Jan 2026 (IAPP)

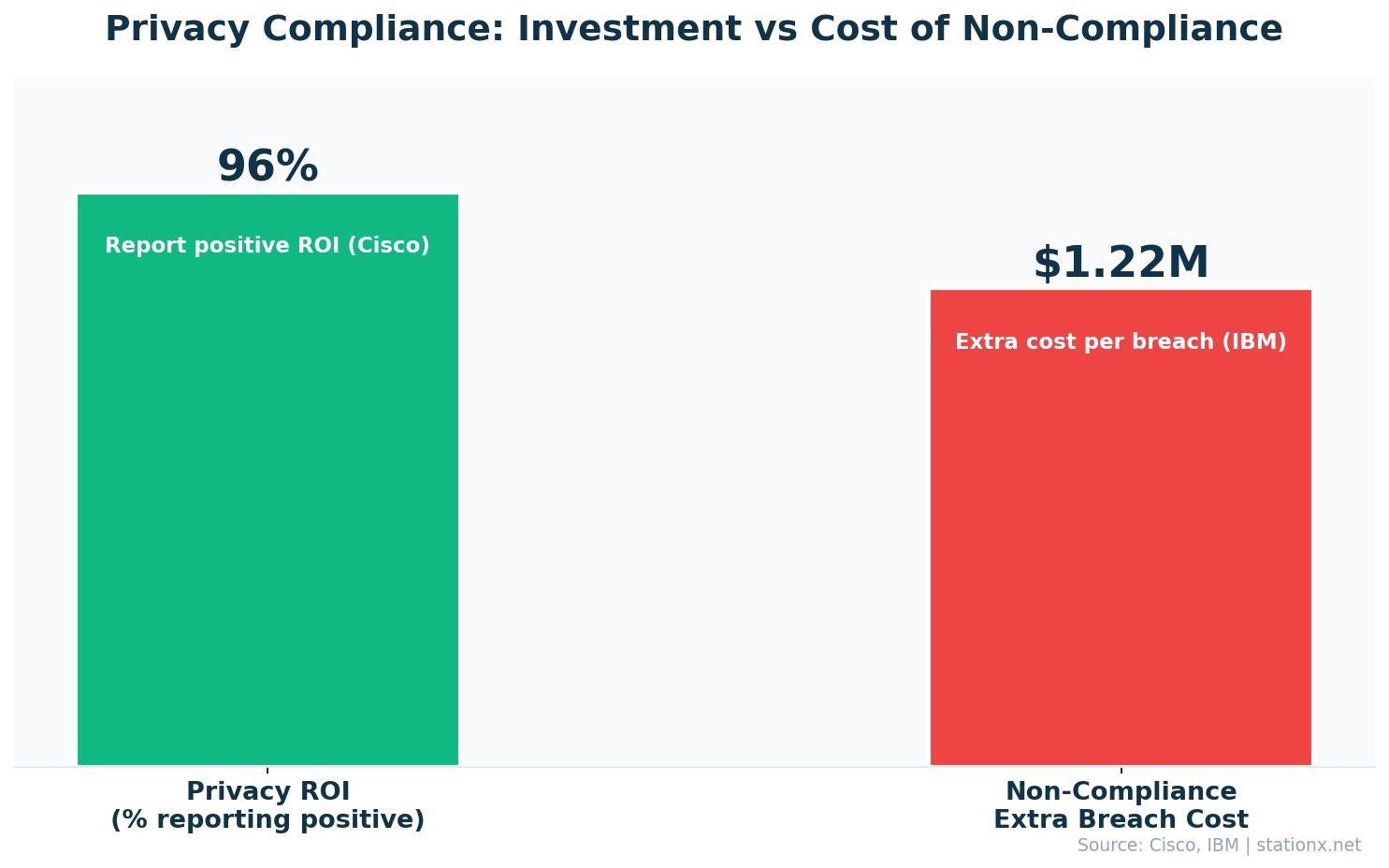

- 96% of organizations say privacy investment returns exceed costs, with a median 1.6x ROI (Cisco 2025)

- 82% of internet users are concerned about how companies collect and use personal data (CookieYes 2025)

- $4.63M average breach cost when shadow AI is heavily involved, $670K more than breaches with little or no shadow AI (IBM 2025)

- 38% of organizations now spend $5M+ annually on privacy, up from 14% in 2024 (Cisco 2026)

Last updated: May 2026

📊 Data Privacy Statistics: The Headlines

These are the data privacy statistics that define the regulatory landscape in 2026. From the global spread of privacy legislation to the financial consequences of non-compliance, the numbers tell a clear story: privacy is no longer optional; it's enforced.

| Finding | Value | Source |
|---|---|---|
| Countries with data privacy laws | 172 | Graham Greenleaf, UNSW Law Research Paper 2025 |
| Cumulative GDPR fines since 2018 | €7.1B | DLA Piper GDPR Fines Survey 2026 |
| Organizations say privacy ROI exceeds costs | 96% | Cisco 2025 Data Privacy Benchmark Study |
| US states with comprehensive privacy laws | 20 | IAPP / MultiState |
| Internet users concerned about data collection | 82% | CookieYes / Global Consumer Privacy Survey |
| Average cost added to a breach by compliance failures | $1.22M | IBM Cost of a Data Breach Report 2025 |
| Number of GDPR fines issued since May 2018 | 2,679 | GDPR Enforcement Tracker |
| Organizations expanding privacy programs for AI | 90% | Cisco 2026 Data and Privacy Benchmark Study |

Nathan House's Analysis: Privacy Law Acceleration

The pace of privacy legislation has been remarkable. In 2015, roughly 100 countries had data protection laws. By 2026, that number has reached 172 — a 72% increase in a decade. The focus is now shifting from enacting new laws to strengthening existing ones. Cross-referencing Greenleaf's global count with the WEF's finding that 76% of CISOs cite regulatory fragmentation as their top challenge, the problem isn't the absence of privacy laws — it's the complexity of complying with dozens of overlapping regimes.

🌍 How Many Countries Have Data Privacy Laws?

172 countries had enacted data protection or privacy legislation by 2025, covering 79% of the world's nations (Greenleaf 2025). The pace of adoption accelerated after GDPR's introduction in 2018, with 12 new countries passing laws in 2023-24 alone.

| Finding | Value | Source |
|---|---|---|
| Countries with data protection/privacy laws | 172 | Graham Greenleaf, UNSW Law Research Paper 2025 |
| Percentage of countries with privacy legislation | 79% | CookieYes / UN Trade and Development |
| CISOs citing regulatory fragmentation as top challenge | 76% | WEF Global Cybersecurity Outlook 2025 |

Key global privacy frameworks beyond GDPR include Brazil's LGPD (2020), China's PIPL (2021), South Africa's POPIA (2021), India's DPDPA (2023), and Saudi Arabia's PDPL (2023). Each follows GDPR principles — consent, purpose limitation, data minimisation — but with region-specific enforcement and scope.

Nathan House's Analysis: The Regulatory Fragmentation Problem

76% of CISOs say regulatory fragmentation is their top challenge (WEF 2025). This makes sense: a multinational company operating in 30 countries may need to comply with 30 different privacy regimes. The data shows that compliance complexity — not the existence of privacy laws — is what drives up costs. IBM's finding that compliance failures add $1.22M to average breach costs underscores the financial penalty for getting this wrong.

Strictest Enforcement

- EU/EEA — GDPR, €7.1B in fines

- Ireland DPC — €3.5B alone (Big Tech HQ)

- France CNIL — €325M fine on Google

- Netherlands — 39,773 breach notifications/year

Growing Frameworks

- Brazil LGPD — Active since 2020

- India DPDPA — Enacted August 2023

- China PIPL — Strictest exit controls

- Saudi Arabia PDPL — 2023, GCC leader

Regional Privacy Law Comparison

Not all privacy laws are created equal. GDPR remains the gold standard with its extraterritorial reach, heavy fines (up to 4% of global turnover), and strong individual rights. China's PIPL (2021) imposes the strictest data exit controls, requiring security assessments for cross-border transfers. India's DPDPA (2023) is the newest major framework, covering 1.4 billion citizens but with narrower scope than GDPR — no right to data portability and limited restrictions on government access.

Brazil's LGPD closely mirrors GDPR's structure, with a national data protection authority (ANPD) that has been increasingly active since 2022. South Africa's POPIA stands out as the strongest framework on the African continent, with enforcement actions beginning in 2023. The pattern is clear: GDPR's influence extends well beyond Europe, with most new privacy laws borrowing heavily from its principles.

🛡️ GDPR Statistics

€7.1 billion in cumulative GDPR fines as of January 2026 — up 21% from €5.88 billion just 12 months earlier (DLA Piper). For the first time since GDPR took effect in May 2018, data protection authorities recorded an average of more than 400 personal data breach notifications per day, a 22% year-over-year increase.

| Finding | Value | Source |
|---|---|---|
| Cumulative GDPR fines (Jan 2026) | €7.1B | DLA Piper GDPR Fines Survey 2026 |
| Cumulative GDPR fines (Jan 2025) | €5.88B | DLA Piper GDPR Fines and Data Breach Survey 2025 |
| Number of GDPR fines issued | 2,679 | GDPR Enforcement Tracker |
| Average daily breach notifications | 400+ | DLA Piper GDPR Fines and Data Breach Survey 2026 |
| YoY increase in daily breach notifications | 22% | DLA Piper GDPR Fines Survey 2026 |
| Ireland DPC cumulative fines | €3.5B | DLA Piper GDPR Fines Survey 2025 |
| GDPR fines issued in 2023 | €1.6B | Statista / Cobalt |

Nathan House's Analysis: Ireland's Outsized Enforcement Role

Ireland's Data Protection Commission has issued €3.5 billion in GDPR fines, about four times more than second-placed Luxembourg. This isn't because Ireland has worse privacy practices; it's because Meta, Google, TikTok, LinkedIn, and Apple all have their European headquarters there. Cross-referencing DLA Piper data, Ireland's €3.5 billion set against the €7.1 billion cumulative total comes to roughly 49% of all GDPR fines by value, making one regulator responsible for nearly half of the EU's entire enforcement output.
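The ratios quoted in this section can be reproduced from the table values. The sketch below is illustrative only: the function and variable names are assumptions made for this example, and the inputs are the rounded figures reported above, so the results are approximate.

```python
# Reproduce the headline GDPR ratios from the rounded figures quoted above.
# All names here are illustrative; values are in billions of euros.

def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


cumulative_jan_2025 = 5.88  # DLA Piper 2025 survey
cumulative_jan_2026 = 7.10  # DLA Piper 2026 survey
ireland_dpc_total = 3.50    # Ireland DPC cumulative fines

fine_growth = pct_change(cumulative_jan_2025, cumulative_jan_2026)
ireland_share = ireland_dpc_total / cumulative_jan_2026 * 100

print(f"Year-over-year growth in cumulative fines: {fine_growth:.1f}%")
print(f"Ireland's share of fines by value: {ireland_share:.1f}%")
```

The table attributes the Ireland figure to the 2025 survey and the cumulative total to the 2026 survey, so the share is best read as an estimate rather than an exact split.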

GDPR Enforcement by Country

Spain leads all EU/EEA countries in the number of enforcement actions with 1,033 fines totalling €123 million. Italy follows with 467 fines worth €277 million. But in terms of total fine value, Ireland dominates due to its jurisdiction over Big Tech's European operations. The Netherlands leads breach notifications with 39,773 per year, followed by Germany (34,467) and Poland (19,065).

The most common GDPR violations triggering fines are insufficient technical and organisational security measures (86 fines), non-compliance with general data processing principles (74 fines), and insufficient legal basis for data processing (73 fines). This tells organisations exactly where to focus their compliance efforts: secure your systems, define clear processing purposes, and document your legal basis.

GDPR Breach Notification Milestone

For the first time since GDPR launched in 2018, breach notifications averaged more than 400 per day. That works out to more than 146,000 breach reports annually across the EU/EEA. The Netherlands alone reported nearly 40,000 notifications. This surge reflects both increased breach activity and stronger reporting compliance, making it harder for organisations to quietly handle incidents.
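As a rough check on the annualised figure, the snippet below multiplies the daily average out to a year and sets the per-country counts quoted earlier against it. It assumes the country figures cover a comparable 12-month period, which the sources above do not confirm, and every name in it is illustrative.

```python
# Annualise the daily notification average and compare it with the
# per-country figures quoted earlier. Names are illustrative only.

DAILY_NOTIFICATIONS = 400  # reported as "400+", so this is a lower bound
DAYS_PER_YEAR = 365

annual_notifications = DAILY_NOTIFICATIONS * DAYS_PER_YEAR

# Annual notifications for the three leading countries (from the text above).
top_reporters = {"Netherlands": 39_773, "Germany": 34_467, "Poland": 19_065}

for country, count in top_reporters.items():
    share = count / annual_notifications * 100
    print(f"{country}: {count:,} notifications (at most ~{share:.0f}% of the total)")

top_three_total = sum(top_reporters.values())
print(f"Annual total (lower bound): {annual_notifications:,}")
print(f"Top three countries combined: {top_three_total:,}")
```

Because 400 per day is a floor, the annual total is a lower bound and each country's share is an upper bound.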

💰 Data Privacy Fines: Largest GDPR Penalties Ever

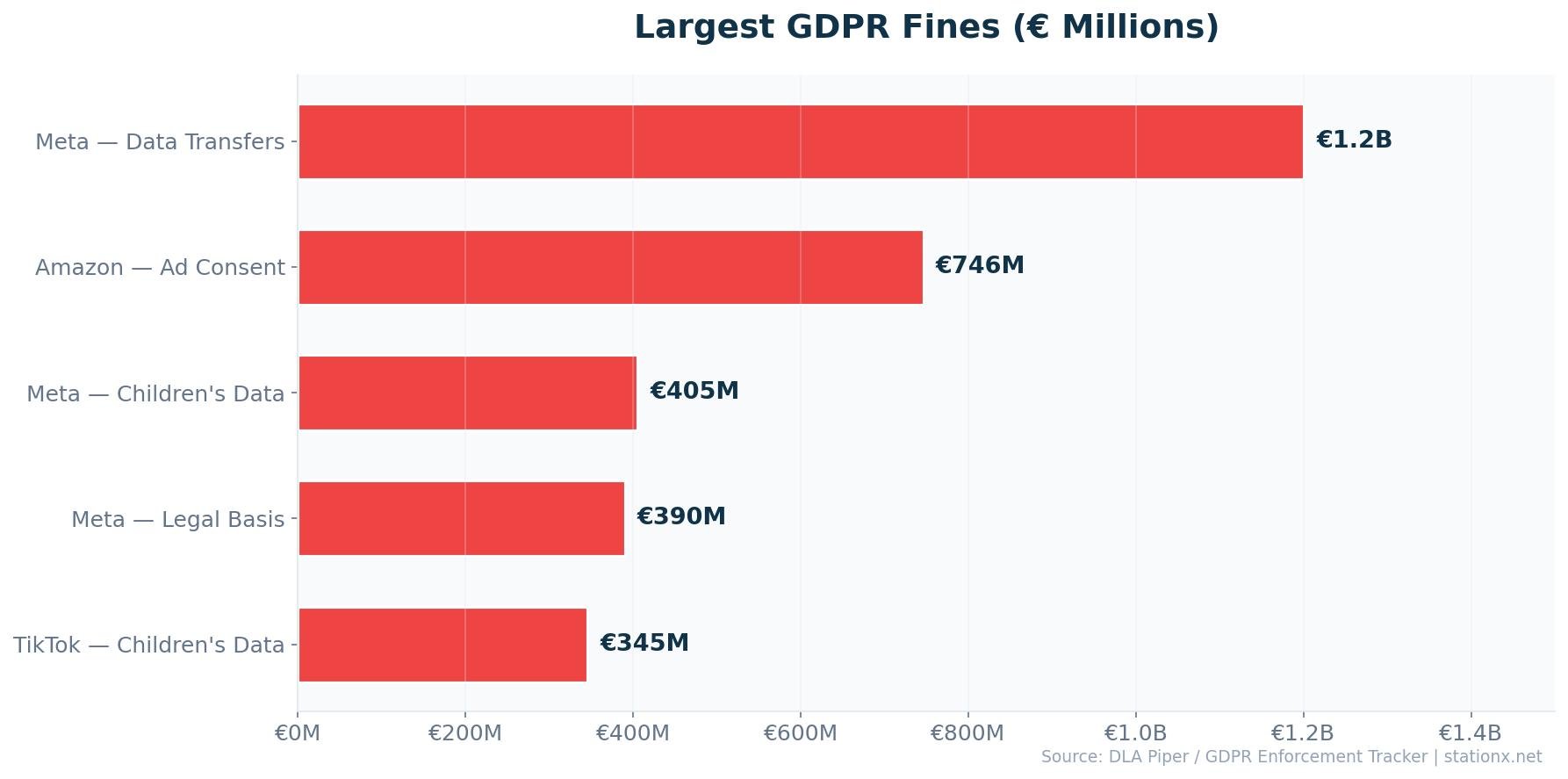

The top three GDPR fines alone total €2.48 billion. Meta holds both first and fourth place, while TikTok has received two separate penalties totalling €875 million. Every company in the table below is a technology platform, and most of the fines relate to cross-border data transfers, inadequate consent mechanisms, or children's data.

| Finding | Value | Source |
|---|---|---|
| Meta: EU-US data transfers (2023) | €1.2B | Ireland Data Protection Commission |
| Amazon: targeted advertising consent (2021) | €746M | CNPD Luxembourg |
| TikTok: China data transfers (2025) | €530M | Ireland Data Protection Commission |
| Meta/Instagram: children's data (2022) | €405M | Ireland Data Protection Commission |
| TikTok: children's data (2023) | €345M | Ireland Data Protection Commission |
| LinkedIn: advertising data (2024) | €310M | Ireland Data Protection Commission |
| Uber: EU-US driver data transfers (2024) | €290M | Dutch Data Protection Authority |

Breaking Down the Top Fines

Meta: €1.2 billion (May 2023). Ireland's DPC fined Meta for continuing to transfer EU user data to the US via Standard Contractual Clauses after the Schrems II ruling invalidated the Privacy Shield framework. Meta was ordered to suspend transatlantic data flows within 6 months. This remains the largest GDPR fine ever issued.

Amazon: €746 million (July 2021). Luxembourg's CNPD fined Amazon for processing personal data for targeted advertising without proper consent. The fine was based on Amazon's entire European revenue base, signalling that regulators would use the 4% of turnover ceiling aggressively.

TikTok: €530 million (May 2025). Ireland's DPC fined TikTok for transferring European user data to China without adequate data protection safeguards. The investigation also found inadequate transparency about how data was processed in China. This was the most recent blockbuster GDPR fine and sent a strong signal about EU-China data flows.

Meta/Instagram: €405 million (September 2022). The DPC fined Meta for exposing children's email addresses and phone numbers on Instagram, and for setting default public visibility on teen accounts. This case established that children's privacy requires a higher standard of protection.

The Cross-Border Data Transfer Pattern

Six of the seven largest GDPR fines relate to cross-border data transfers or consent violations. The pattern is clear: moving European user data to the US or China without adequate safeguards is the highest-risk activity under GDPR. Meta's record €1.2B fine (EU-US transfers), TikTok's €530M fine (EU-China transfers), and Uber's €290M fine (EU-US driver data) all share this root cause.

The Children's Data Pattern

Three of the top ten GDPR fines specifically relate to children's data: Meta/Instagram's €405M, TikTok's €345M, and TikTok's €530M (which included children's data concerns alongside cross-border transfers). Combined, children's data violations account for over €1.28 billion in GDPR fines. Regulators have signalled that children's privacy is an enforcement priority, and the trend is accelerating with California's Age-Appropriate Design Code Act and the UK's Children's Code already in force.
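The totals quoted for these patterns can be checked by tagging each fine with the themes the article attributes to it and summing per theme. This is a sketch only: the `Fine` class, the theme tags and the helper name are assumptions made for this example, not an official classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fine:
    company: str
    amount_m: int      # fine in millions of euros
    themes: frozenset  # illustrative tags, assigned for this example


# Amounts from the table above; theme tags follow the article's descriptions.
FINES = [
    Fine("Meta", 1200, frozenset({"cross-border"})),
    Fine("Amazon", 746, frozenset({"consent"})),
    Fine("TikTok", 530, frozenset({"cross-border", "children"})),
    Fine("Meta/Instagram", 405, frozenset({"children"})),
    Fine("TikTok", 345, frozenset({"children"})),
    Fine("LinkedIn", 310, frozenset({"advertising"})),
    Fine("Uber", 290, frozenset({"cross-border"})),
]


def total_for_theme(theme: str) -> int:
    """Sum of fines (in millions of euros) tagged with the given theme."""
    return sum(f.amount_m for f in FINES if theme in f.themes)


largest_first = sorted(FINES, key=lambda f: f.amount_m, reverse=True)
top_three = sum(f.amount_m for f in largest_first[:3])
tiktok_total = sum(f.amount_m for f in FINES if f.company == "TikTok")

print(f"Top three fines: €{top_three / 1000:.2f}B")
print(f"TikTok total: €{tiktok_total}M")
print(f"Cross-border transfers: €{total_for_theme('cross-border')}M")
print(f"Children's data: €{total_for_theme('children')}M")
```

TikTok's €530M fine carries two tags, so it counts towards both the cross-border and the children's data totals. The theme totals overlap and should not be added together.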

Fine Trends: Beyond Big Tech

While headline fines target technology companies, GDPR enforcement is expanding into other sectors. Recent cases show regulators targeting SMEs, retailers, energy companies, and employers. Spain, which leads all countries with 1,033 enforcement actions, issues the majority of its fines against mid-market companies rather than tech giants. The message: GDPR compliance isn't just a Big Tech problem.

Top Fine Categories

- Cross-border data transfers — €2B+

- Children's data processing — €1.28B+

- Consent violations — €750M+

- Advertising data misuse — €310M+

Enforcement Leaders

- Spain — 1,033 fines (most actions)

- Italy — 467 fines (high value)

- Ireland — €3.5B (highest value)

- France — Google €325M fine

🇺🇸 US Data Privacy Laws

20 US states now have comprehensive data privacy laws, with Indiana, Kentucky, and Rhode Island taking effect on 1 January 2026 (IAPP/MultiState). There is still no federal privacy law. California's CCPA/CPRA remains the strictest, with the CPPA issuing a $1.35 million fine against Tractor Supply Company in 2025.

| Finding | Value | Source |
|---|---|---|
| States with comprehensive privacy laws | 20 | IAPP / MultiState |
| Largest CCPA/CPRA fine to date | $1.35M | California Privacy Protection Agency |
| CCPA max penalty per intentional violation | $7,988 | California Privacy Protection Agency |

The 20 states are: California, Colorado, Connecticut, Delaware, Florida, Indiana, Iowa, Kentucky, Maryland, Minnesota, Montana, Nebraska, New Hampshire, New Jersey, Oregon, Rhode Island, Tennessee, Texas, Utah, and Virginia. California leads enforcement with the CPPA actively investigating hundreds of cases.

Nathan House's Analysis: The Federal Vacuum Creates Compliance Chaos

With 20 state-level privacy laws and no federal standard, US companies operating nationally face a patchwork of requirements. Each state defines "personal information," consumer rights, and enforcement differently. Cross-referencing this with IBM's finding that compliance failures add $1.22M to average breach costs, the absence of a unified federal law isn't just a political issue — it's a direct financial risk for every US business.

CCPA/CPRA Enforcement

California's Privacy Protection Agency (CPPA) has been the most active US enforcement body. The $1.35 million fine against Tractor Supply Company in 2025 — for a non-functioning "Do Not Sell" mechanism — sent a clear signal. The CPPA has hundreds of ongoing investigations, and from January 2026, new regulations require mandatory cybersecurity audits and risk assessments for automated decision-making technology.

CCPA penalties increased in 2025: civil penalties now range from $2,663 to $7,988 per violation, with higher amounts for violations involving minors under 16. At $7,988 per intentional violation, a company with 100,000 affected consumers could face theoretical exposure of nearly $800 million — putting CCPA fine potential in GDPR territory for large-scale violations.
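The exposure arithmetic is simple enough to sketch. The example below assumes one violation per affected consumer, which is a simplification for illustration and not a statement of how the CPPA or a court would count violations. The function and constant names are hypothetical.

```python
# Theoretical CCPA exposure, assuming one violation per affected consumer.
# Penalty amounts are the 2025 figures quoted above; names are illustrative.

PENALTY_STANDARD = 2_663     # USD per violation (lower figure quoted above)
PENALTY_INTENTIONAL = 7_988  # USD per intentional violation


def theoretical_exposure(affected_consumers: int, intentional: bool = True) -> int:
    """Upper-bound exposure in USD if every consumer counts as one violation."""
    per_violation = PENALTY_INTENTIONAL if intentional else PENALTY_STANDARD
    return affected_consumers * per_violation


worst_case = theoretical_exposure(100_000)
baseline = theoretical_exposure(100_000, intentional=False)
print(f"Intentional: ${worst_case:,}")  # Intentional: $798,800,000
print(f"Standard:    ${baseline:,}")    # Standard:    $266,300,000
```

These are ceilings, not predictions: the largest fine actually issued so far, per the figures above, is $1.35 million.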

What US State Privacy Laws Cover

While all 20 US state privacy laws share common principles (consumer rights to access, delete, and opt out of data sales), they differ in key areas. California's CCPA/CPRA is the strictest, with a dedicated enforcement agency (CPPA) and penalties up to $7,988 per violation. Virginia's VCDPA was the second comprehensive state law (in effect since 2023) but has no private right of action. Colorado was the first to require a universal opt-out mechanism.

From January 2026, California's new CPPA regulations require mandatory cybersecurity audits for certain business categories and risk assessments for automated decision-making technology (ADMT). This is a significant expansion: for the first time, a US state privacy law directly regulates algorithmic decision-making, mirroring the EU AI Act's approach to high-risk AI systems.

Strongest US State Laws

- California — Dedicated CPPA, $7,988/violation

- Colorado — Universal opt-out required

- Connecticut — No cure period for violations

- Maryland — Strongest employee data protections

Key Consumer Rights (All States)

- Right to access personal data

- Right to delete personal data

- Right to opt out of data sales

- Right to correct inaccurate data

The states without comprehensive privacy laws are notable too. The 30 remaining states include large-population states like New York, Illinois, and Pennsylvania. Several of these states have proposed bills but failed to pass them, often due to industry lobbying. Illinois does have BIPA (Biometric Information Privacy Act), which has generated significant litigation but is sector-specific rather than comprehensive.

👤 Consumer Privacy Attitudes

82% of internet users worldwide are concerned about how companies collect and use their personal data (CookieYes 2025). 75% say they won't purchase from companies they don't trust with their information. The gap between concern and action is closing — 48% have already stopped buying from a company specifically because of privacy concerns.

| Finding | Value | Source |
|---|---|---|
| Internet users concerned about data collection | 82% | CookieYes / Global Consumer Privacy Survey |
| Won't buy from companies they distrust with data | 75% | CookieYes / Global Consumer Privacy Survey |
| Stopped buying over privacy concerns | 48% | CookieYes / Global Consumer Privacy Survey |
| Want control over what data is collected | 84% | IAPP / Consumer Privacy Survey |
| View AI as a significant privacy threat | 57% | IAPP / Consumer Privacy Survey |
| Deleted an app over privacy (past 12 months) | 85% | IAPP / Consumer Privacy Survey |

Consumer privacy attitudes. Sources: CookieYes, IAPP 2025

The Privacy-Trust Spectrum

Consumer privacy statistics reveal a spectrum from passive concern to active action. At the awareness level, 82% express concern about data collection — this is nearly universal. At the consideration level, 75% say they won't buy from distrusted companies — privacy enters the purchase decision. At the action level, 48% have already stopped buying from a company over privacy — trust failures have concrete revenue consequences.

The data also shows that younger consumers are more likely to take privacy action. 84% want control over what data is collected, which aligns with the success of Apple's App Tracking Transparency (ATT) framework, which saw 96% of US users opt out of tracking when given the choice. The trend is clear: privacy is moving from a nice-to-have to a purchase criterion.

Nathan House's Analysis: The Trust-Revenue Connection

The data draws a direct line from privacy practices to revenue. 82% of consumers are concerned, 75% won't buy from distrusted companies, and 48% have already walked away from a purchase. Cross-referencing with Cisco's finding that 96% of organisations see positive privacy ROI, the business case isn't theoretical — privacy investment directly protects revenue. The 34-percentage-point gap between concern (82%) and action (48%) represents the companies currently at risk of losing customers as privacy awareness keeps rising.

AI compounds these concerns. 57% of consumers now view artificial intelligence as a significant privacy threat (IAPP 2025). As AI tools become embedded in customer-facing services, the organisations that can demonstrate transparent, privacy-respecting AI use will have a competitive advantage over those that can't.

Consumer Actions Speak Louder Than Surveys

The most striking data point isn't the concern percentage — it's the action percentage. Over the past 12 months, 85% of consumers deleted a phone app for privacy reasons. 82% opted out of sharing personal data. 78% avoided a website entirely. 67% decided against making an online purchase. These aren't hypothetical preferences; they're measurable revenue impacts.

84% of consumers say they want control over what information companies collect about them. The companies responding to this demand — with clear consent mechanisms, transparent data practices, and genuine opt-out options — are the ones retaining customers. The companies ignoring it are losing them.

48% have taken direct purchasing action vs 82% who express concern. The gap is closing.

Social Media Companies Face the Biggest Trust Deficit

Consumers identify social media companies (53%) as their top privacy concern, followed by governments (46%) and search engines (43%). This maps directly to the GDPR enforcement data: Meta has received the largest GDPR fine ever (€1.2B) as well as the fourth-largest (€405M), TikTok has received two fines totalling €875M, and LinkedIn was fined €310M. The companies consumers trust least are exactly the ones regulators are fining most.

💵 Privacy Compliance Costs & ROI

96% of organisations say privacy investment returns exceed costs, with a median 1.6x ROI (Cisco 2025). Average annual privacy spending sits at $2.7 million, but that figure is climbing fast: 38% of organisations now spend $5 million or more on privacy, up from just 14% in 2024 — a 2.7x increase (Cisco 2026).

| Finding | Value | Source |
|---|---|---|
| Say privacy investment returns exceed costs | 96% | Cisco 2025 Data Privacy Benchmark Study |
| Median ROI on privacy investment | 1.6x | Cisco 2025 Data Privacy Benchmark Study |
| Average annual privacy spending | $2.7M | Cisco 2025 Data Privacy Benchmark Study |
| Organizations spending $5M+ on privacy | 38% | Cisco 2026 Data and Privacy Benchmark Study |
| Average cost increase from compliance failure | $1.22M | IBM Cost of a Data Breach Report 2025 |
| Savings from incident response planning | $2.66M | IBM Cost of a Data Breach Report 2025 |
| Average breach cost with DevSecOps | $3.89M | IBM Cost of a Data Breach Report 2025 |
| Breached organizations that paid regulatory fines | 32% | IBM Cost of a Data Breach Report 2025 |
| Fines exceeding $250K (of those fined) | 25% | IBM Cost of a Data Breach Report 2025 |

Nathan House's Analysis: The 2.7x Privacy Spending Surge

Cisco's 2026 data shows 38% of organisations now spend $5M+ on privacy annually, up from 14% in 2024. That's a 2.7x increase driven largely by AI. 90% of organisations expanded privacy programmes specifically because of AI adoption (Cisco 2026). Cross-referencing with IBM's finding that shadow AI breaches cost $670K more than standard incidents, the spending increase is rational: AI is creating new privacy risks that didn't exist two years ago, and organisations are spending to contain them before regulators act.

The Four Privacy ROI Benefits

Cisco's survey of 2,600+ privacy and security professionals identifies four key benefits that drive the 96% positive ROI finding. Enhanced customer loyalty ranks highest at 79%, followed by improved operational efficiency (78%), increased innovation (78%), and reduced security losses (76%). The message is clear: privacy isn't just a defensive investment — it enables better business outcomes across multiple dimensions.
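Cisco's median 1.6x return can be turned into a rough estimate for a given budget. The sketch below assumes the median multiple applies uniformly to your programme, which it may not. The function name and the dictionary keys are hypothetical.

```python
# Back-of-the-envelope privacy ROI, assuming the Cisco median multiple
# applies to your programme. Function and key names are hypothetical.

MEDIAN_ROI_MULTIPLE = 1.6  # Cisco 2025 median return on privacy spend


def estimate_privacy_roi(annual_spend: float,
                         roi_multiple: float = MEDIAN_ROI_MULTIPLE) -> dict:
    """Return estimated benefit and net gain for a given annual spend (USD)."""
    estimated_benefit = annual_spend * roi_multiple
    return {
        "annual_spend": annual_spend,
        "estimated_benefit": estimated_benefit,
        "net_gain": estimated_benefit - annual_spend,
    }


# Example: the $2.7M average annual privacy spend reported by Cisco.
result = estimate_privacy_roi(2_700_000)
print(f"Estimated benefit: ${result['estimated_benefit']:,.0f}")
print(f"Net gain:          ${result['net_gain']:,.0f}")
```

A median says nothing about the spread, so an individual organisation's return could sit well above or below this estimate.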

IBM's data adds the downside: 32% of breached organisations paid regulatory fines, with 25% paying more than $250,000. Organisations without incident response plans paid an average of $2.66 million more per breach. DevSecOps adopters reduced their average breach cost to $3.89 million versus the $4.44 million global average. The data consistently shows that proactive privacy and security investment costs less than reactive incident response.

Regulatory Fines: The Growing Cost of Non-Compliance

IBM's 2025 data reveals a growing regulatory burden on breached organisations. 32% of companies that suffered a breach also paid regulatory fines, with nearly half of those fines exceeding $100,000. The US imposes the highest regulatory penalties among breached organisations, reflecting both CCPA enforcement and sector-specific regulators like the SEC and OCC.

The cost of compliance failure extends beyond fines. IBM calculates that non-compliance adds an average of $1.22 million to total breach costs through remediation requirements, mandatory notification expenses, legal fees, and mandated security improvements. In some jurisdictions, non-compliant organisations face ongoing audit requirements that add further costs for years after the initial breach.

Cost of Getting It Right

- $2.7M average annual privacy spend

- 96% report positive ROI

- $2.66M savings from incident response planning

- $3.89M average breach cost with DevSecOps

Cost of Getting It Wrong

- $1.22M added to breach cost from compliance failures

- 32% of breached organisations paid regulatory fines

- 25% of fines exceeded $250,000

- $4.63M average breach cost with shadow AI

🤖 Data Privacy and AI

Breaches involving high levels of shadow AI cost organisations $4.63 million on average, $670K more than breaches with little or no shadow AI (IBM 2025). 63% of organisations have no formal AI governance policies, and 83% lack controls to prevent employees uploading confidential data to AI tools. Yet 90% of organisations have expanded their privacy programmes specifically because of AI (Cisco 2026).

| Finding | Value | Source |
|---|---|---|
| Average breach cost involving shadow AI | $4.63M | IBM Cost of a Data Breach Report 2025 |
| Organizations lacking AI governance policies | 63% | IBM Cost of a Data Breach Report 2025 |
| No controls to prevent data uploads to AI | 83% | IBM Cost of a Data Breach Report 2025 |
| Planning to reallocate privacy budgets to AI | 99% | Cisco 2025 Data Privacy Benchmark Study |
| Expanded privacy programs due to AI | 90% | Cisco 2026 Data and Privacy Benchmark Study |
| With mature AI governance committees | 12% | Cisco 2026 Data and Privacy Benchmark Study |
| Experienced data exposure from public GenAI | 60% | Reco / State of Shadow AI Report 2025 |
| Projected AI governance spending (2026) | $492M | Gartner |
| Organizations requiring AI use approval | 45% | IBM Cost of a Data Breach Report 2025 |
| Implementing adversarial testing for AI | 22% | IBM Cost of a Data Breach Report 2025 |

Nathan House's Analysis: The AI Governance Gap

90% of organisations have expanded privacy programmes for AI, but only 12% have mature AI governance committees (Cisco 2026). 63% lack any formal AI governance policy (IBM 2025). This gap is expensive: shadow AI breaches cost $670K more than standard incidents. The EU AI Act's full implementation in August 2026 will add regulatory teeth, with fines up to 7% of global turnover for prohibited AI practices. Organisations that haven't built governance frameworks by then will face both financial and regulatory risk.

60% of organisations have already experienced at least one data exposure event linked to employee use of a public generative AI tool (Reco 2025). Gartner projects AI governance spending will reach $492 million in 2026, reflecting the urgency. The EU AI Act adds another layer: its transparency rules take effect in August 2026, and non-compliance with prohibited AI practices can trigger fines up to 7% of global annual turnover.

The EU AI Act and Privacy

The EU AI Act entered into force on 1 August 2024 and will be fully applicable from August 2026. It supplements GDPR with a risk-based approach to AI regulation. Eight categories of AI practice are banned outright, including social scoring and real-time biometric surveillance in public spaces. High-risk AI systems — including those used in employment, credit scoring, and law enforcement — face mandatory conformity assessments and ongoing monitoring.

For privacy professionals, the AI Act creates a dual compliance challenge. Organisations must satisfy both GDPR's data protection requirements and the AI Act's transparency and safety obligations. The fine structure is aggressive: up to 7% of global annual turnover for prohibited practices (versus GDPR's 4%), up to 3% for other violations, and up to 1% for providing incorrect information. In theory, an organisation violating both GDPR and the AI Act simultaneously could face combined penalties of up to 11% of global turnover (7% plus 4%).
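To make the ceilings concrete, the sketch below applies the turnover percentages quoted above to a hypothetical company. It deliberately ignores the fixed-amount alternatives that both regulations also define, and the tier labels are illustrative names rather than terms from either text.

```python
# Theoretical fine ceilings as a share of global annual turnover, using the
# percentages quoted above. Integer arithmetic avoids rounding surprises.
# Tier names are illustrative labels, not terms from either regulation.

CEILING_PERCENT = {
    "ai_act_prohibited_practice": 7,
    "ai_act_other_violation": 3,
    "gdpr_upper_tier": 4,
}


def fine_ceiling(turnover_eur: int, tier: str) -> int:
    """Maximum turnover-based fine in euros for the given tier."""
    return turnover_eur * CEILING_PERCENT[tier] // 100


def combined_ceiling(turnover_eur: int) -> int:
    """Worst case: a prohibited AI practice plus a top-tier GDPR violation."""
    return (fine_ceiling(turnover_eur, "ai_act_prohibited_practice")
            + fine_ceiling(turnover_eur, "gdpr_upper_tier"))


turnover = 10_000_000_000  # hypothetical €10B global annual turnover
print(f"AI Act ceiling:   €{fine_ceiling(turnover, 'ai_act_prohibited_practice'):,}")
print(f"GDPR ceiling:     €{fine_ceiling(turnover, 'gdpr_upper_tier'):,}")
print(f"Combined ceiling: €{combined_ceiling(turnover):,}")
```

Whether regulators would actually stack both maximums for the same conduct is an open question, so the combined figure is best treated as a theoretical upper bound.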

Shadow AI: The Invisible Privacy Risk

83% of organisations have no technical controls to prevent employees uploading confidential data to AI tools (IBM 2025). This means customer PII, proprietary information, and regulated data can end up in third-party AI models with no audit trail and no ability to delete it. 97% of AI-related breaches occurred in companies lacking proper access controls. The fix starts with a basic policy: define which AI tools are approved, what data can be shared with them, and how to monitor compliance.
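The basic policy described above (approved tools, permitted data, monitoring) can be expressed as a small lookup plus a check. This is a minimal sketch under stated assumptions: the tool names, data classes and function names are all hypothetical, and a real control would sit in a proxy or DLP layer rather than a script.

```python
# A toy AI usage policy: which tools are approved, and the most sensitive
# data class each may receive. Tool names and classes are hypothetical.

DATA_CLASSES = ["public", "internal", "confidential", "regulated"]  # least to most sensitive

APPROVED_TOOLS = {
    "internal-assistant": "confidential",
    "public-chatbot": "public",
}


def is_upload_allowed(tool: str, data_class: str) -> bool:
    """Allow an upload only to an approved tool cleared for that data class."""
    if tool not in APPROVED_TOOLS:
        return False  # unapproved (shadow) tools are always blocked
    max_allowed = APPROVED_TOOLS[tool]
    return DATA_CLASSES.index(data_class) <= DATA_CLASSES.index(max_allowed)


def audit(events: list[tuple[str, str, str]]) -> list[tuple[str, str, str]]:
    """Return the (user, tool, data_class) events that break the policy."""
    return [event for event in events if not is_upload_allowed(event[1], event[2])]


violations = audit([
    ("alice", "internal-assistant", "internal"),
    ("bob", "public-chatbot", "confidential"),
    ("carol", "unknown-tool", "public"),
])
for user, tool, data_class in violations:
    print(f"Policy violation: {user} sent {data_class} data to {tool}")
```

Defaulting to "blocked" for any tool not on the list is the design choice that addresses shadow AI: a new tool has to be reviewed before it can receive any data at all.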

The 99% Privacy-to-AI Budget Shift

99% of organisations plan to reallocate resources from privacy budgets to AI initiatives (Cisco 2025). This statistic deserves careful interpretation. It doesn't mean organisations are cutting privacy — overall privacy spending is rising. It means they're integrating privacy and AI governance into a single function. The organisations getting this right are those treating AI governance as an extension of privacy, not a replacement for it.

AI Governance: What Mature Programmes Look Like

Cisco's 2026 study found that three in four organisations have a dedicated AI governance committee, but only 12% describe those committees as "mature and proactive." The rest are either newly formed, advisory-only, or reactive. Mature programmes share common characteristics: they require impact assessments before AI deployment, maintain inventories of AI systems processing personal data, implement technical controls preventing data uploads to unapproved tools, and conduct regular adversarial testing.

The financial case for mature AI governance is strengthening. Companies with strong AI governance save $287,000 annually compared to those without (Gartner). Gartner projects AI governance spending will hit $492 million in 2026 and surpass $1 billion by 2030. In regulated industries, one in four compliance audits in 2026 will include specific inquiries into AI tool governance and data handling. Organisations without documented AI governance policies will face audit findings and potential enforcement.

Only 12% of organisations have mature, proactive AI governance committees (Cisco 2026)

The practical starting point is straightforward: document which AI tools your organisation uses, what data they process, what controls prevent sensitive data exposure, and who is accountable for AI governance decisions. Only 22% of organisations have implemented adversarial testing for AI (IBM 2025), and only 45% require approval before AI use. These are low-hanging-fruit improvements that significantly reduce privacy risk.
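One way to capture that starting point is an inventory record per AI tool with a gap check against it. The sketch below is an assumption about how such a record might look. The `AIToolRecord` fields and the `governance_gaps` helper are hypothetical and not taken from the Cisco or IBM studies.

```python
from dataclasses import dataclass, field


@dataclass
class AIToolRecord:
    """One row in a hypothetical AI system inventory."""
    name: str
    data_processed: list[str] = field(default_factory=list)
    controls: list[str] = field(default_factory=list)
    owner: str = ""
    requires_approval: bool = False
    adversarially_tested: bool = False


def governance_gaps(record: AIToolRecord) -> list[str]:
    """List the documentation gaps for a single inventory record."""
    gaps = []
    if not record.data_processed:
        gaps.append("data processed is undocumented")
    if not record.controls:
        gaps.append("no controls against sensitive data exposure")
    if not record.owner:
        gaps.append("no accountable owner")
    if not record.requires_approval:
        gaps.append("no approval required before use")
    if not record.adversarially_tested:
        gaps.append("no adversarial testing")
    return gaps


chatbot = AIToolRecord(
    name="support-chatbot",
    data_processed=["customer emails"],
    owner="privacy-office",
    requires_approval=True,
)
for gap in governance_gaps(chatbot):
    print(f"{chatbot.name}: {gap}")
```

Even a spreadsheet with these columns would serve the same purpose. What matters is that every tool has an entry and every empty field is visible as a gap.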

🏥 Data Privacy by Industry

Regulated industries face the highest privacy-related breach costs. Healthcare breaches average $7.42 million (IBM 2025), driven by strict HIPAA requirements and the high value of medical records. Financial services breaches average $5.56 million. Customer PII is the most commonly compromised data type, involved in 53% of all breaches.

| Finding | Value | Source |
|---|---|---|
| Average cost for breaches lasting >200 days | $5.01M | IBM Cost of a Data Breach Report 2025 |
| Breaches involving customer PII | 53% | IBM Cost of a Data Breach Report 2025 |

Breaches that take longer than 200 days to contain cost $5.01 million on average (IBM 2025). The combination of strict regulation, high-value data, and slow detection creates the most expensive breach scenarios. Healthcare organisations should note that OCR imposed 21 HIPAA enforcement penalties in 2025, up from 16 the prior year.

The Regulation-Cost Paradox

Regulated industries pay more per breach: healthcare at $7.42M and financial services at $5.56M versus the $4.44M global average. But regulation also drives better practices: IBM's data shows compliance failures add $1.22M to breach costs. That is a cost penalty of roughly 27% on top of the $4.44M global average, which means the question isn't whether to invest in privacy compliance, but how fast you can close existing gaps.
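The percentages behind this comparison follow from the IBM figures quoted in this section. The snippet below is a quick check using those rounded values. The names are illustrative, and the global average is assumed to be the right baseline for the compliance penalty.

```python
# Express each figure relative to the $4.44M global average breach cost.
# Values are in millions of USD, as quoted from IBM 2025; names are illustrative.

GLOBAL_AVERAGE = 4.44
COMPLIANCE_FAILURE_ADDED_COST = 1.22

breach_costs = {
    "Healthcare": 7.42,
    "Financial services": 5.56,
}


def premium_pct(cost: float, baseline: float = GLOBAL_AVERAGE) -> float:
    """How far a cost sits above the baseline, as a percentage."""
    return (cost - baseline) / baseline * 100


for industry, cost in breach_costs.items():
    print(f"{industry}: {premium_pct(cost):.0f}% above the global average")

non_compliance_penalty = COMPLIANCE_FAILURE_ADDED_COST / GLOBAL_AVERAGE * 100
print(f"Non-compliance penalty: {non_compliance_penalty:.0f}% of the global average")
```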

Healthcare: The Costliest Sector

Healthcare has held the top position for breach costs for 14 consecutive years. The sector faces a unique combination of challenges: strict HIPAA regulations, high-value medical records (worth up to 10x more than credit card data on the dark web), and legacy IT systems. In 2024, more than 276 million patient records were compromised — a 64% increase from 2023's record year. Healthcare breaches also take longer to detect, averaging 213 days before discovery versus 194 days across other industries.

Financial Services: Regulatory Pressure

Financial services is the second costliest industry for data breaches at $5.56 million per incident. The sector faces overlapping regulations — GDPR, PCI DSS, SOX, and sector-specific rules from financial regulators. Stolen credentials remain the top attack vector in financial services, and the industry's rapid adoption of AI tools has created new data privacy concerns around customer profiling and automated decision-making.

Highest Breach Cost Industries

- Healthcare — $7.42M average

- Financial services — $5.56M

- Critical infrastructure — $4.82M

- Education — $3.80M

Key Privacy Drivers

- HIPAA, PCI DSS, SOX regulation

- Customer PII in 53% of breaches

- 213-day average detection in healthcare

- 21 HIPAA enforcement penalties in 2025

Education and Public Sector

Education ranks fourth for breach costs at $3.80 million per incident. Schools and universities collect vast amounts of student data — including minors' data — making them subject to both general privacy laws (GDPR, CCPA) and sector-specific regulations (FERPA in the US, UK Children's Code). The shift to hybrid learning has expanded the attack surface, with student data now distributed across dozens of third-party educational technology platforms.

The public sector faces unique data privacy challenges. Government agencies hold sensitive citizen data but often operate with smaller security budgets and legacy infrastructure. The EU's GDPR applies equally to public bodies, with several European government agencies receiving fines for inadequate security measures and excessive data collection. US government agencies must comply with state privacy laws when handling resident data, adding another layer of complexity.

Nathan House's Analysis: Regulation-Driven Industry Costs

The correlation between regulatory stringency and breach costs isn't a coincidence; two mechanisms drive it. Regulated industries face higher breach costs partly because regulators impose additional penalties and remediation requirements. But they also face higher costs because they process more sensitive data that attracts more sophisticated attackers. The data protection statistics show that regulation increases both the floor and ceiling of breach costs, making proactive investment even more critical in heavily regulated sectors.

📝 Data Privacy Trends: Key Takeaways

The data privacy landscape in 2026 is defined by three converging forces: near-universal legislation, AI-driven complexity, and consumers who increasingly vote with their wallets. Here's what the data tells us about where privacy is heading.

- Privacy law coverage is near-universal. 172 countries (79% worldwide) have data protection laws, and the focus is shifting from enactment to enforcement and strengthening.

- GDPR enforcement keeps accelerating. €7.1B in cumulative fines, 400+ daily breach notifications, and Ireland's DPC single-handedly issuing €3.5B in penalties against Big Tech.

- Cross-border data transfers are the biggest risk. Six of the seven largest GDPR fines relate to moving EU data to the US or China without adequate safeguards.

- The US patchwork grows more complex. 20 states have comprehensive privacy laws with no federal standard, creating compliance chaos for national businesses.

- Privacy investment pays for itself. 96% report positive ROI (median 1.6x), while compliance failures add $1.22M to breach costs.

- AI is the new privacy frontier. 90% of organisations expanded privacy programmes for AI, but only 12% have mature governance. Shadow AI breaches cost $670K more.

- Consumers are voting with their wallets. 82% are concerned about data use, 75% won't buy from distrusted companies, and 48% have already walked away.

- Children's data is an enforcement priority. Three of the top 10 GDPR fines target children's data violations, totalling over €1.28 billion.

- The privacy spending surge is AI-driven. 38% of organisations now spend $5M+ on privacy, up 2.7x from 2024, driven by AI governance needs.
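Two of the takeaways above rest on derived ratios, and both can be checked against figures quoted elsewhere in this article: the 2.7x spending increase (38% of organisations in 2026 versus 14% in 2024) and Ireland's share of cumulative GDPR fines (€3.5B of €7.1B). A small sketch, with hypothetical helper names:

```python
def multiple(new: float, old: float) -> float:
    """How many times larger the new value is than the old one."""
    return new / old


def share(part: float, total: float) -> float:
    """Part as a percentage of total."""
    return part / total * 100


# Share of organisations spending $5M+ on privacy: 38% (2026) vs 14% (2024).
spend_multiple = multiple(38, 14)

# Ireland's DPC fines as a share of all GDPR fines, in billions of euros.
irish_share = share(3.5, 7.1)

print(f"spending multiple: {spend_multiple:.1f}x")
print(f"Ireland's share of GDPR fines: {irish_share:.0f}%")
```

The results, 2.7x and 49%, match the "up 2.7x" and "nearly half" wording used in this article.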

Looking Ahead: 2026 and Beyond

Three developments will shape data privacy in the coming year. First, the EU AI Act reaches full applicability in August 2026, adding AI-specific privacy obligations with fines up to 7% of global turnover. Second, the US state privacy patchwork will continue growing — at least 5 more states are expected to pass comprehensive privacy laws in 2026-2027. Third, AI governance will move from an aspiration to a compliance requirement, as regulators worldwide begin treating uncontrolled AI data processing as a privacy violation.

For organisations, the path forward is clear: invest in privacy (the ROI is proven), build AI governance frameworks now (before regulation forces it), and treat consumer trust as a measurable business metric (because 75% of consumers will walk away if you don't).

Privacy Compliance Priorities

Map your data flows and legal basis

Know what personal data you collect, where it goes (especially cross-border), and which legal basis supports each processing activity. The top GDPR fines are for missing or inadequate legal basis.

Build AI governance before regulators force it

63% of organisations lack AI governance policies (IBM). Create an AI tool inventory, implement upload controls, and require approval before deployment. The EU AI Act makes this mandatory from August 2026.

Invest in incident response planning

Organisations with IR plans save $2.66M per breach (IBM). With 400+ daily breach notifications under GDPR, the question is when, not if, you'll need one.

Make privacy visible to customers

75% of consumers won't buy from companies they distrust. Clear consent mechanisms, genuine opt-out options, and transparent data practices directly protect revenue.

Monitor the US state patchwork

20 states and growing. If you operate nationally, you need a compliance strategy that covers all active state privacy laws, not just CCPA.
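The first priority above, mapping data flows and legal basis, lends itself to a simple register that can be reviewed automatically. The following is a minimal sketch under stated assumptions: the `ProcessingActivity` fields, the `review` function, the sample region set, and the example activities are hypothetical. The six enum members mirror the lawful bases in GDPR Article 6(1).

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class LegalBasis(Enum):
    """The six lawful bases listed in GDPR Article 6(1)."""
    CONSENT = "consent"
    CONTRACT = "contract"
    LEGAL_OBLIGATION = "legal obligation"
    VITAL_INTERESTS = "vital interests"
    PUBLIC_TASK = "public task"
    LEGITIMATE_INTERESTS = "legitimate interests"


@dataclass
class ProcessingActivity:
    """One hypothetical entry in a data-flow register."""
    purpose: str
    data_categories: list[str]
    destination_country: str                   # where the data ends up
    legal_basis: Optional[LegalBasis] = None
    transfer_safeguard: Optional[str] = None   # free-text note on the transfer mechanism


def review(activity: ProcessingActivity, home_region: set[str]) -> list[str]:
    """Flag a missing legal basis and unsafeguarded cross-border transfers."""
    issues = []
    if activity.legal_basis is None:
        issues.append("no legal basis recorded")
    crosses_border = activity.destination_country not in home_region
    if crosses_border and not activity.transfer_safeguard:
        issues.append("cross-border transfer without a recorded safeguard")
    return issues


HOME_REGION = {"IE", "DE", "FR"}  # hypothetical subset, for illustration only

activities = [
    ProcessingActivity("newsletter", ["email"], "DE", LegalBasis.CONSENT),
    ProcessingActivity("analytics", ["device ID"], "US"),
]

for activity in activities:
    print(activity.purpose, review(activity, HOME_REGION))
```

Keeping the legal basis as an enum rather than free text makes a missing or misspelled basis visible at review time.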

Nathan House's Analysis: The Three Convergences

Three data points define where privacy is heading. One: Cisco shows 96% positive privacy ROI, proving investment works. Two: IBM shows $670K additional cost from shadow AI breaches, proving unmanaged AI is expensive. Three: IAPP shows 48% of consumers have already stopped buying over privacy concerns, proving trust impacts revenue. The organisations that connect these three dots — privacy investment, AI governance, and consumer trust — will outperform those that treat privacy as a compliance checkbox.

❓ Data Privacy Statistics FAQ

How many countries have data privacy laws?

172 countries have enacted data protection or privacy legislation as of 2025, covering 79% of all nations worldwide (Greenleaf 2025). The pace of adoption accelerated significantly after the EU's GDPR took effect in 2018, with 12 new countries passing laws in 2023-24 alone. Key global frameworks include the EU GDPR, Brazil's LGPD, China's PIPL, India's DPDPA, and South Africa's POPIA.

How much have GDPR fines totalled?

GDPR fines have reached €7.1 billion cumulatively since May 2018 (DLA Piper 2026). 2,679 individual fines have been issued. The largest single fine was Meta's €1.2 billion penalty for transferring EU user data to the US without adequate safeguards. Ireland's Data Protection Commission has issued €3.5 billion in fines — nearly half the total — because most Big Tech companies have their European headquarters there.

How many US states have privacy laws?

20 US states have comprehensive data privacy laws as of 2026 (IAPP/MultiState). The most recent states to take effect are Indiana, Kentucky, and Rhode Island (January 2026). California's CCPA/CPRA remains the strictest framework, with the CPPA actively investigating hundreds of cases and issuing fines up to $7,988 per intentional violation. There is no federal privacy law.

What is the ROI of privacy investment?

96% of organisations say their privacy investment returns exceed costs, with a median return of 1.6x (Cisco 2025 Privacy Benchmark Study). Average annual privacy spending is $2.7 million across surveyed organisations. Benefits include enhanced customer loyalty (79%), improved operational efficiency (78%), increased innovation (78%), and reduced security losses (76%). Conversely, compliance failures add an average of $1.22 million to data breach costs (IBM 2025).

How does AI affect data privacy?

AI creates new privacy risks and drives increased investment. Shadow AI breaches cost organisations $4.63 million on average — $670K above standard incidents (IBM 2025). 63% of organisations have no formal AI governance policies, and 83% lack controls to prevent data uploads to AI tools. 90% of organisations have expanded privacy programmes specifically because of AI (Cisco 2026). The EU AI Act, fully applicable from August 2026, adds regulatory enforcement with fines up to 7% of global turnover for prohibited AI practices.

What is the largest GDPR fine ever issued?

The largest GDPR fine ever issued was €1.2 billion against Meta Platforms in May 2023 by Ireland's Data Protection Commission. Meta was fined for transferring European user data to the United States without adequate safeguards after the Schrems II ruling invalidated the Privacy Shield framework. Meta was ordered to suspend transatlantic data flows within six months. The second and third largest GDPR fines are Amazon's €746 million and TikTok's €530 million.

What are the data privacy trends for 2026?

Key data privacy trends for 2026 include the EU AI Act reaching full applicability in August 2026, continued growth in US state-level privacy laws (20 states now have comprehensive laws), a 2.7x increase in organisations spending $5M+ on privacy (driven by AI), and 400+ daily GDPR breach notifications. Consumer privacy awareness continues to rise, with 82% of internet users concerned about data collection and 48% already taking purchasing action based on privacy practices. AI governance is becoming a compliance requirement rather than optional.

What is the difference between GDPR and CCPA?

GDPR (EU) and CCPA/CPRA (California) are the two most significant privacy frameworks but differ in scope and enforcement. GDPR applies to all organisations processing EU residents' data globally, with fines up to 4% of global turnover or €20 million, whichever is higher. CCPA applies to businesses meeting specific revenue or data volume thresholds in California, with fines of $2,663 to $7,988 per violation. GDPR requires a lawful basis, such as consent, for every processing activity, while CCPA focuses on the right to opt out of data sales. GDPR has generated €7.1 billion in fines since 2018, while CCPA's largest fine to date is $1.35 million.
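The two penalty models scale very differently, which a short calculation makes concrete. This sketch assumes the GDPR ceiling is the higher of the two amounts, as Article 83(5) specifies, and it simplifies CCPA by treating the two per-violation figures quoted above as a lower and upper bound. The function names and example inputs are hypothetical.

```python
GDPR_FIXED_CAP_EUR = 20_000_000
GDPR_TURNOVER_PERCENT = 4
CCPA_FINE_LOW_USD = 2_663    # per violation
CCPA_FINE_HIGH_USD = 7_988   # per intentional violation


def gdpr_max_fine(global_turnover_eur: float) -> float:
    """Upper-tier GDPR ceiling: the higher of EUR 20M or 4% of global turnover."""
    turnover_based = global_turnover_eur * GDPR_TURNOVER_PERCENT / 100
    return max(GDPR_FIXED_CAP_EUR, turnover_based)


def ccpa_fine_range(violations: int) -> tuple[int, int]:
    """Simplified CCPA exposure: every violation at the low or the high rate."""
    return violations * CCPA_FINE_LOW_USD, violations * CCPA_FINE_HIGH_USD


# Hypothetical companies: EUR 100M and EUR 2B in global annual turnover.
print(gdpr_max_fine(100_000_000))     # fixed cap applies
print(gdpr_max_fine(2_000_000_000))   # turnover-based cap applies

# Hypothetical incident affecting 1,000 consumers, one violation each.
print(ccpa_fine_range(1_000))
```

For the smaller company the €20 million fixed cap is the binding ceiling; for the larger one the 4% rule takes over at €80 million. CCPA exposure grows with the number of violations rather than with company size.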

About This Data

This article draws from 51 statistics aggregated from 50+ authoritative sources including IBM Cost of a Data Breach, Verizon DBIR, CrowdStrike Global Threat Report, WEF Global Cybersecurity Outlook, FBI IC3, ISC2 Cybersecurity Workforce Study, Sophos, Gartner, Mandiant M-Trends, and Ponemon Institute reports.

Derived statistics (marked "Nathan House's Analysis") are computed by cross-referencing data from multiple sources — for example, comparing breach costs across industries using IBM data, or validating ransomware trends across Verizon, Sophos, and HIPAA Journal findings.

All statistics include inline source citations with links to primary sources. Data spans 2023-2026, with preference given to the most recent available figures. Last updated: May 2026.

About the Author

Nathan House, StationX

Nathan House is a cybersecurity expert with 30 years of hands-on experience. He holds OSCP, CISSP, and CEH certifications, has secured £71 billion in UK mobile banking transactions, and has worked with clients including Microsoft, Cisco, BP, Vodafone, and VISA. Named Cyber Security Educator of the Year 2020 and a UK Top 25 Security Influencer 2025, Nathan is a featured expert on CNN, Fox News, and NBC. He founded StationX, which has trained over 500,000 students in cybersecurity.
